The Data-Ready Factory Starts With Trust

Manufacturing executives who master data governance and interoperability will define the competitive landscape of the next industrial decade.

TAKEAWAYS:

● Manufacturers that treat data governance as a strategic capability gain measurable advantages in operational agility, forecast accuracy, and capital allocation.

● Interoperability is the prerequisite for autonomous decision-making, and organizations that cannot bridge their data silos see diminished returns on AI investments.

● Data mastery starts with shared definitions and ownership, aligning people, processes, and architecture before selecting any platform or technology.

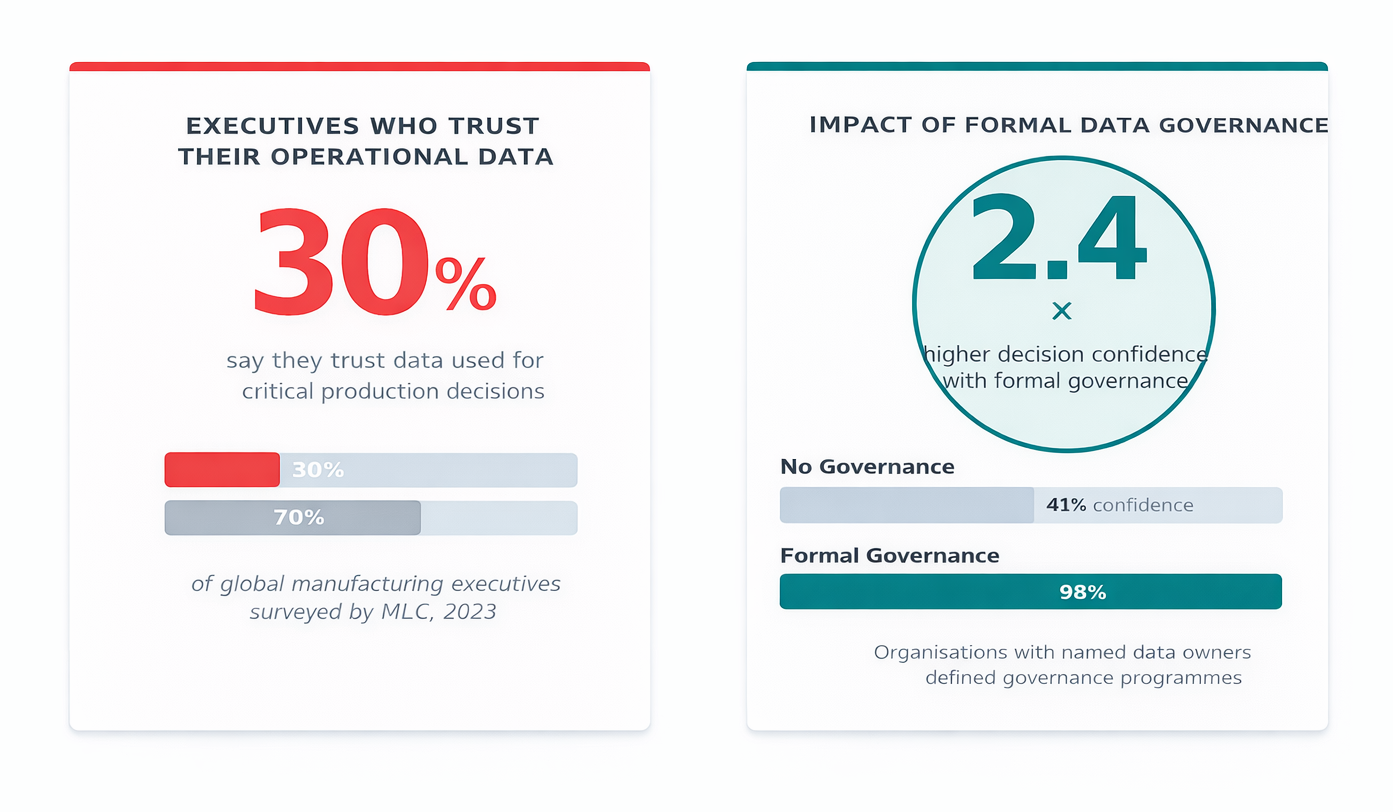

There is a paradox at the heart of modern manufacturing. Factories today generate more data than at any point in industrial history. Terabytes of sensor readings, production events, quality flags, supply signals, and energy metrics flow continuously through operations that would have been unimaginable a generation ago. Yet, when executives are asked whether they trust the data they use to make decisions, fewer than a third express confidence.

This gap between data volume and data confidence is not a technology problem; it is a governance problem. Closing it has become one of the most consequential leadership challenges of the decade.

Why Data Trust Has Become the Central Issue

The ambitions of Manufacturing 4.0/5.0 (autonomous scheduling, predictive quality, and AI-driven supply chain optimization) all rest on a single premise: that the data underpinning those algorithms is accurate, timely, and consistent across the enterprise. When it is not, the results are not merely inefficient; they are actively misleading.

A 2023 study by the Manufacturing Leadership Council (MLC) found that data quality and integration ranked among the top three barriers to advanced analytics adoption across a global panel of manufacturing executives. The same research—available in the MLC’s State of Smart Manufacturing Report—noted that organizations with formal data governance structures reported 2.4 times higher confidence in their production decisions than those without.

This matters because the cost of bad data in manufacturing is not abstract. For example, when a scheduling algorithm relies on yield data that has not been reconciled across shifts, the resulting plans will appear optimal on paper but fail on the floor. Similarly, when procurement models ingest supplier lead times from multiple systems that define “confirmed order” differently, inventory buffers expand and working capital erodes. The downstream effects of these upstream data ambiguities compound silently until they surface as a missed quarterly target.

Figure 1: Data Trust and Governance Maturity in Global Manufacturing Operations

The Data Confidence Gap: share of manufacturing executives who trust the data used for operational decisions vs. those who have formal governance structures in place. Source: Manufacturing Leadership Council, 2023.

The Three Dimensions of Data Mastery

Data mastery in manufacturing is not a destination; it is an operating discipline built across three interconnected dimensions: governance, quality, and interoperability. Each is necessary, yet none is sufficient alone.

Governance establishes the rules of the road: who owns which data, what it means, how it is measured, and who is accountable when it is wrong. Without governance, even the most sophisticated data infrastructure becomes an expensive source of competing truths. The critical insight for manufacturing executives is that governance is fundamentally an organizational design challenge, not a software configuration. It requires naming data owners: not merely nominal data stewards, but senior leaders who bear the consequences when the numbers are unreliable.

Data governance is not a technology initiative. It is a leadership decision about who owns the truth.

Quality is governance in motion. It means building operational processes that catch errors at the source—at the machine, at the shift handover, at the supplier portal—rather than attempting to clean data downstream after it has already influenced decisions. Leading manufacturers are embedding data quality metrics directly into operational performance scorecards, treating a data error rate the same way they treat a defect rate: as something that carries an owner, a target, and an escalation path.

Interoperability is the architectural expression of governance and quality. It is the capacity of data to flow accurately and meaningfully across systems—from production execution to enterprise planning, from plant to boardroom—without losing fidelity or requiring manual translation. This is where most organizations are furthest behind, and where the consequences of inaction are becoming most acute.

The Interoperability Imperative

The average large manufacturer operates with dozens of disconnected systems across its production and supply chain footprint, including programmable logic controllers (PLCs), supervisory control and data acquisition (SCADA) systems, manufacturing execution systems (MES), enterprise resource planning (ERP) systems, and increasingly, cloud-based analytics environments. These systems were implemented at different times, by different teams, and with different data models, resulting in an architecture that cannot support the decision velocity that competitive manufacturing now demands.

Consider what autonomous production optimization actually requires. A system that can recommend a schedule adjustment in response to an upstream supply disruption needs to simultaneously read inventory positions from ERP, current work-in-progress status from MES, equipment availability from maintenance systems, and energy pricing signals from utility feeds. This information must be contextualized and available with sufficient confidence to act without human validation. This is not a challenge any single system can solve; it is an interoperability challenge.
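One way to picture "sufficient confidence to act without human validation" is a gate that checks every input for freshness and quality before an automated recommendation is released. The following is a minimal Python sketch under stated assumptions: the source names, freshness limits, quality scores, and the 0.95 threshold are all hypothetical and would be set by each organization's own governance rules.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class SourceReading:
    """One input to a scheduling decision (all fields are illustrative)."""
    source: str            # e.g. "ERP inventory"
    as_of: datetime        # when the value was last refreshed
    quality_score: float   # 0.0 to 1.0, however the organization scores it
    max_age: timedelta     # freshness limit agreed for this source


def blocking_sources(readings, now, min_quality=0.95):
    """Return the names of sources that are too stale or too unreliable."""
    blocked = []
    for r in readings:
        too_old = now - r.as_of > r.max_age
        too_weak = r.quality_score < min_quality
        if too_old or too_weak:
            blocked.append(r.source)
    return blocked


def can_act_autonomously(readings, now, min_quality=0.95):
    """Allow action without human validation only if no source is blocking."""
    return not blocking_sources(readings, now, min_quality)


# Hypothetical snapshot of the four inputs named in the text.
now = datetime(2024, 1, 15, 8, 0, tzinfo=timezone.utc)
readings = [
    SourceReading("ERP inventory", now - timedelta(minutes=10), 0.99, timedelta(hours=1)),
    SourceReading("MES work-in-progress", now - timedelta(minutes=2), 0.97, timedelta(minutes=5)),
    SourceReading("Maintenance availability", now - timedelta(hours=6), 0.98, timedelta(hours=4)),
    SourceReading("Utility energy pricing", now - timedelta(minutes=20), 0.90, timedelta(minutes=30)),
]

print(blocking_sources(readings, now))      # stale maintenance feed, weak pricing feed
print(can_act_autonomously(readings, now))  # False: route to a human planner
```

In this sketch a single stale or low-quality feed is enough to send the recommendation back to a human, which is why gaps in any one system limit the autonomy of the whole chain.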

The emergence of open standards, including ISA-95, OPC UA, and the IDTA Asset Administration Shell, has created a more navigable path toward semantic interoperability than existed five years ago. But standards adoption alone does not solve the problem. It creates the conditions under which the problem can be solved, provided the governance foundations are already in place.

Organizations that have made meaningful progress on interoperability share a common characteristic: they started with alignment on what data means before they invested in infrastructure to move it. They built shared data dictionaries. They mapped the lineage of key operational metrics—where a number originates, how it is transformed, and where it lands—and they resolved definitional conflicts at each step before connecting systems.
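A shared data dictionary can start as little more than a table of terms, the systems that use them, and the definition each system applies. The sketch below, in Python, shows one possible way to surface definitional conflicts from such a table. The systems and the wording of each definition are invented for illustration, and the function name is hypothetical.

```python
from collections import defaultdict

# Hypothetical extract: how each system defines the same business term.
definitions = [
    {"term": "confirmed order", "system": "ERP",
     "definition": "purchase order acknowledged by supplier"},
    {"term": "confirmed order", "system": "Supplier portal",
     "definition": "ship date committed by supplier"},
    {"term": "yield", "system": "MES",
     "definition": "good units / units started"},
    {"term": "yield", "system": "Quality system",
     "definition": "Good units / units  started"},
]


def find_definition_conflicts(rows):
    """Group definitions by term and return terms with more than one meaning."""
    by_term = defaultdict(lambda: defaultdict(list))
    for row in rows:
        # Normalize case and whitespace so cosmetic differences are ignored.
        normalized = " ".join(row["definition"].lower().split())
        by_term[row["term"]][normalized].append(row["system"])
    return {
        term: dict(variants)
        for term, variants in by_term.items()
        if len(variants) > 1
    }


conflicts = find_definition_conflicts(definitions)
for term, variants in conflicts.items():
    print(f"Conflict on '{term}':")
    for definition, systems in variants.items():
        print(f"  {', '.join(systems)}: {definition}")
```

A text comparison like this only finds differences in wording. Deciding which definition becomes the standard remains a decision for the named data owner.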

Framework for the Path Forward

For manufacturing leaders assessing their data readiness, the following framework offers a practical starting point:

- Audit the definitional layer first. Before discussing platforms, map the 10 to 15 most critical operational metrics, such as overall equipment effectiveness (OEE), yield, schedule adherence, and lead time, and determine whether they are defined consistently across every system and site that uses them. Most organizations find they are not.

- Assign data ownership at the executive level. Every critical data domain should have a named owner—not an IT team, but an operational leader whose performance is affected by the reliability of that data. This single structural change has more impact on data quality than any tooling investment.

- Treat integration as infrastructure, not as a project. Organizations that achieve durable data flow do so by adopting architectural patterns—data fabric, unified namespace, or semantic layer approaches—that decouple data sharing from individual system upgrades.

- Measure data health as an operational key performance indicator (KPI). Data quality metrics—completeness, accuracy, timeliness, and consistency—should appear on operational dashboards alongside throughput and quality. What gets measured gets managed.
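To make the last point concrete, the sketch below computes three of the four data quality dimensions for a handful of shift records in Python. It is an illustration under stated assumptions: the records, the 30-minute reporting window, and the consistency rule are hypothetical. Accuracy is left out because it requires a trusted reference value to compare against.

```python
from datetime import datetime, timedelta

# Hypothetical end-of-shift records; None marks a missing value.
records = [
    {"line": "A", "good_units": 940, "total_units": 1000,
     "reported_at": datetime(2024, 1, 15, 14, 5)},
    {"line": "B", "good_units": None, "total_units": 800,
     "reported_at": datetime(2024, 1, 15, 14, 10)},
    {"line": "C", "good_units": 510, "total_units": 500,
     "reported_at": datetime(2024, 1, 15, 16, 30)},
    {"line": "D", "good_units": 720, "total_units": 750,
     "reported_at": datetime(2024, 1, 15, 14, 0)},
]

shift_end = datetime(2024, 1, 15, 14, 0)
reporting_window = timedelta(minutes=30)  # assumed deadline after shift end


def data_health(rows, shift_end, window):
    """Return the share of records that pass each data quality check."""
    if not rows:
        raise ValueError("no records to assess")
    n = len(rows)
    # Completeness: every field has a value.
    complete = [r for r in rows if all(v is not None for v in r.values())]
    # Timeliness: the record arrived within the agreed reporting window.
    timely = [r for r in rows if r["reported_at"] - shift_end <= window]
    # Consistency: good units can never exceed total units.
    # Incomplete records cannot be checked, so they count as failures.
    consistent = [r for r in complete if r["good_units"] <= r["total_units"]]
    return {
        "completeness": len(complete) / n,
        "timeliness": len(timely) / n,
        "consistency": len(consistent) / n,
    }


health = data_health(records, shift_end, reporting_window)
for metric, share in health.items():
    print(f"{metric}: {share:.0%}")
```

Shares like these can sit on the same dashboard as throughput and defect rate, each with an owner and a target.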

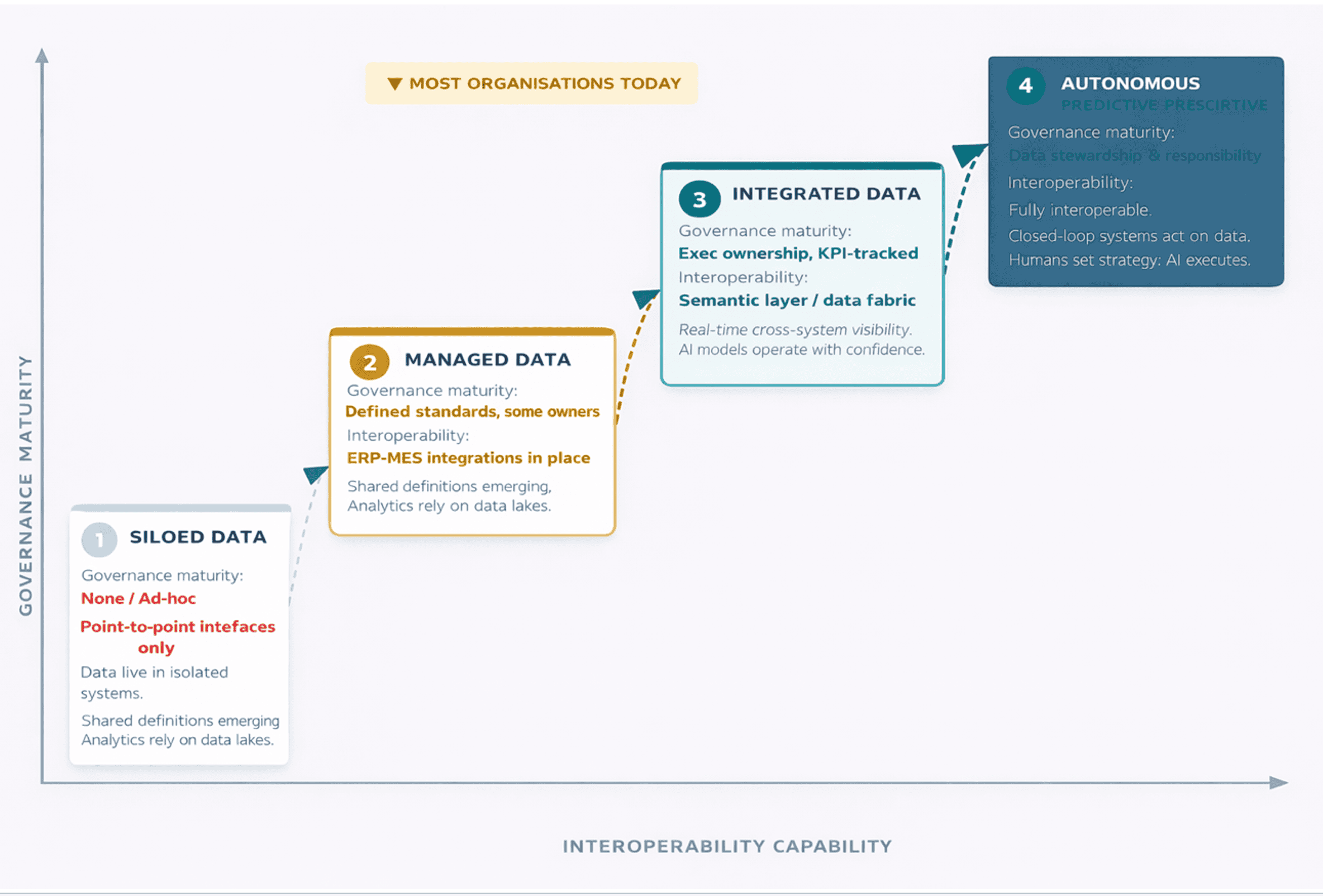

Figure 2: Industrial Data Maturity: From Siloed to Autonomous

The Data Mastery Maturity Model: four stages from siloed data to autonomous decision intelligence, mapped against governance maturity and interoperability capability. Source: Original illustration.

The Leadership Imperative

Data governance is not a program that manufacturing IT departments can manage in isolation. The organizations making the most progress are those where the chief operating officer, or an equivalent executive, has placed data quality on the same agenda as safety, quality, and delivery, treating it not as a technology initiative, but as an operational discipline with corresponding accountability structures.

The logic is straightforward. If the purpose of a Manufacturing 4.0 investment is to improve decision-making (to enable better planning, faster responses to disruption, and more precise capital deployment), then the quality of the data informing those decisions is not a mere technical detail. It is the foundation. Every advanced algorithm is only as reliable as the data it consumes.

The manufacturers who will define the competitive landscape of the next decade are not necessarily those with the most sophisticated AI or the most connected factories. Rather, they are those who have built the discipline of data trust deeply into their operations, ensuring that applied intelligence amplifies human judgment instead of distorting it.

The factory of the future runs on data, but it runs well only when that data is governed, mastered, and shared with integrity.

About the author:

Prashanth Mysore is Global Strategic Business Development Senior Director at Dassault Systèmes.

Sources:

— 2023 State of Smart Manufacturing Report — Manufacturing Leadership Council

— ISA-95 Enterprise-Control System Integration Standard — ISA

— Asset Administration Shell Specification — IDTA