From Legacy to Leading Edge: Real Stories of Data-Driven Transformation

Four company stories highlight the essential role of effective data management and analysis for competitive advantage.

TAKEAWAYS:

● Companies struggle with outdated legacy systems and practices; they must modernize to gain both short- and long-term advantages.

● Establishing a data-driven culture requires a delicate balance of technology, people, and processes aligned to unlock the data’s value.

● Companies need to embrace and leverage AI to maximize value from their data.

In an era where data reigns supreme, organizations are reevaluating their data strategies. The challenge lies not only in collecting vast amounts of information but also in transforming that data into actionable insights that drive meaningful change.

Many companies are overwhelmed, ensnared by legacy systems and outdated practices. Others are boldly stepping into the future, harnessing the power of analytics and artificial intelligence (AI) to redefine their operations.

The journey toward a data-driven culture requires a delicate balance of technology, people, and processes, all aligned toward a common goal: unlocking the true potential of data. As organizations embark on this path, they recognize that success depends not only on the tools they deploy but also on the foundational elements that support them.

What does it take to cultivate a thriving data ecosystem? How can companies navigate the complex terrain of data management, governance, and analytics to foster a culture of innovation? The answers lie in the diverse experiences of organizations that have ventured down this path, each facing its own unique challenges and triumphs.

Solving the Puzzle

The following client stories provide insights into the strategies employed by four different companies, each addressing unique challenges in data management and analytics. Through these narratives, we will explore how they confronted their obstacles, leveraged technology, and ultimately redefined their operational frameworks. Together, these stories not only highlight the diverse approaches to data transformation but also illustrate how each piece is essential for achieving a fully optimized system.

Breaking the Mold: Reinventing Data Management

Imagine a prominent chemicals company, rich in history and innovation, suddenly realizing that its data infrastructure is hindering its progress. With a patchwork of outdated systems and siloed information, the organization faces a daunting challenge: how to leverage data to fuel its ambitious growth strategy. It becomes clear that a comprehensive enterprise data strategy is not just a nice-to-have; it is essential for survival in a competitive market.

The work begins with a deep dive into the company’s IT setup. A thorough review reveals that the organization lacks a mature data foundation, which is vital for effective visibility and decision-making. Specifically, there are no clearly defined data products—domain-specific solutions that aggregate and present data in ways that align with strategic business needs across various functions such as procurement, supply chain, and finance.

The lack of a structured data framework prevents the current operating model from scaling to meet future demands. Leadership faces challenges due to a lack of actionable metrics and key performance indicators (KPIs) essential for informed decision-making across the value chain.

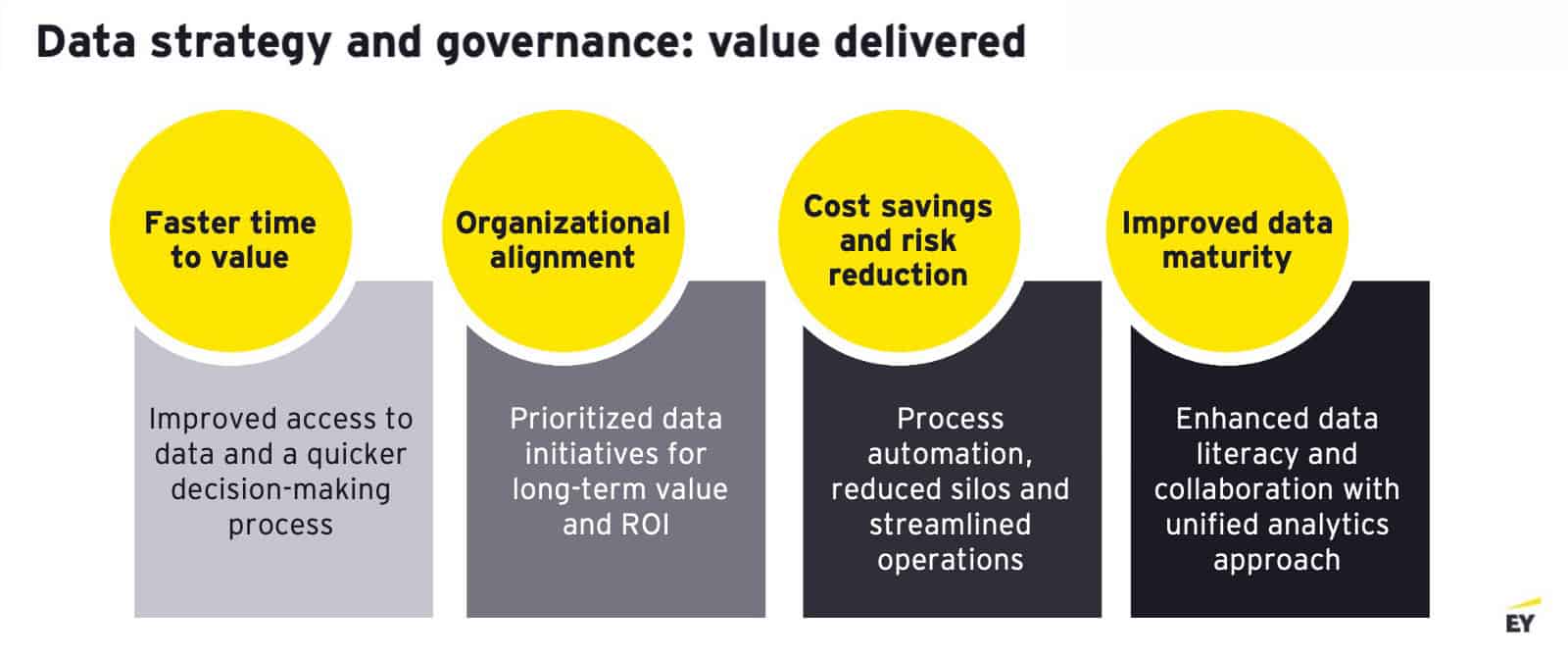

This critical moment sparks a pivotal decision: to seek external expertise to help the company develop a comprehensive data strategy. The goal is to build a robust, domain-specific data foundation that improves visibility and empowers executive leadership to make informed, data-driven decisions. By enhancing their data maturity and investing in business intelligence and reporting capabilities, the organization can transform its approach to data management and analytics.

“The chemicals company’s experience exemplifies the importance of treating data as a strategic asset.”

Business units gain the autonomy to access and analyze data independently, decreasing their reliance on IT. This newfound independence allows teams to generate insights on their own, resulting in faster decision-making and a greater sense of ownership over their data.

As this transformation unfolds, the company experiences immediate benefits. Engagement with business stakeholders increases, and there is a clear sense of direction in data initiatives. By aligning their data strategy with strategic business goals and investing in the right technology, they unlock value previously trapped in legacy systems.

The chemicals company’s experience exemplifies the importance of treating data as a strategic asset. By embracing change and fostering a culture of data-driven decision-making, they not only laid the foundation for future growth but also positioned themselves to capitalize on new opportunities in an ever-evolving landscape. Their story serves as a powerful reminder that with the right mindset and strategy, organizations can turn their data challenges into catalysts for success.

Key Learnings: Establishing a robust data management operating model is crucial for creating a scalable foundation that aligns with strategic business goals and promotes a culture of data-driven decision-making. It’s also essential to build solutions around the data currently available, even if it isn’t perfect, rather than waiting for flawless data before taking action.

Transforming Data, Realizing Value: Quick Wins in a Long-Term Strategy

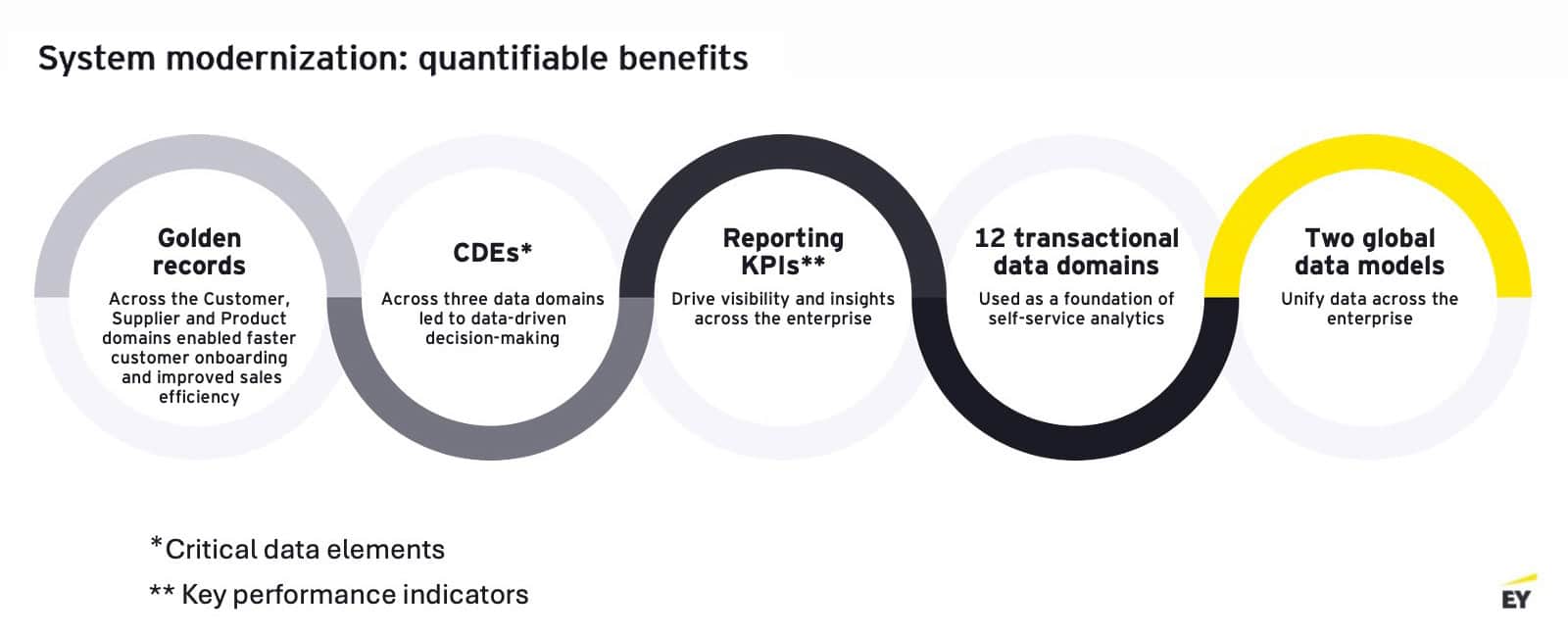

Faced with the challenge of consolidating over 70 legacy systems, a leading water management company embarked on an ambitious project to unify these disparate systems into a single, streamlined solution. The stakes were high, as the company had invested millions in a new enterprise resource planning (ERP) system, but the true value of that investment remained untapped, waiting to be unlocked through effective data management and analytics.

As it began this ERP overhaul, the company quickly realized that merely replacing old systems wouldn’t suffice. They needed a strategy that enabled real-time access to and use of their data, even during the transition. This realization led to a pivotal decision: to implement a unified layer of analytics that would connect their legacy systems with the new ERP system.

The project commenced with a focus on user experience. The company introduced a dashboard that provided employees with seamless access to data, regardless of its source, whether the legacy systems or the new ERP. This approach allowed users to continue their work without interruption as the outdated systems were phased out, enabling them to derive insights and make informed decisions without waiting for the entire ERP consolidation to conclude.
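One way to picture such a unified analytics layer is as a set of small adapters that translate each source into one shared record shape, so that a dashboard query never needs to know where a row came from. The sketch below is illustrative only: the field names (`PLANT`, `PART_NO`, `QTY`, `site_id`, `material`, `on_hand`), the `InventoryRecord` type, and the sample rows are all hypothetical and are not taken from the company's actual systems.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, List


@dataclass(frozen=True)
class InventoryRecord:
    """Common schema used by the analytics layer (hypothetical)."""
    site: str
    item: str
    quantity: int
    source: str  # which system the row came from


def from_legacy(rows: Iterable[dict]) -> List[InventoryRecord]:
    # Assumed legacy export: upper-case keys, padded strings, text quantities.
    return [
        InventoryRecord(r["PLANT"].strip(), r["PART_NO"].strip(), int(r["QTY"]), "legacy")
        for r in rows
    ]


def from_erp(rows: Iterable[dict]) -> List[InventoryRecord]:
    # Assumed ERP extract: already typed, different field names.
    return [
        InventoryRecord(r["site_id"], r["material"], r["on_hand"], "erp")
        for r in rows
    ]


def inventory_by_site(records: Iterable[InventoryRecord]) -> Dict[str, int]:
    """Total on-hand quantity per site, regardless of source system."""
    totals: Dict[str, int] = {}
    for rec in records:
        totals[rec.site] = totals.get(rec.site, 0) + rec.quantity
    return totals


# Made-up sample rows: one site is still partly on the legacy system.
legacy_rows = [
    {"PLANT": "TX01 ", "PART_NO": "P-100", "QTY": "40"},
    {"PLANT": "OH02", "PART_NO": "P-200", "QTY": "15"},
]
erp_rows = [{"site_id": "TX01", "material": "P-300", "on_hand": 25}]

unified = from_legacy(legacy_rows) + from_erp(erp_rows)
totals = inventory_by_site(unified)
```

Under these assumptions, retiring a legacy system means dropping its adapter and adding rows to the ERP extract; the dashboard query itself does not change.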

“By prioritizing data accessibility and fostering a culture of analytics, the water management company not only navigated its transformation successfully but also positioned itself for future growth.”

As the project progressed, the company experienced a shift in mindset. It moved away from the traditional waterfall approach, where analytics was often an afterthought, and instead made data a central component of its strategy. This proactive approach allowed the company to realize value almost immediately, achieving 80 percent to 90 percent of its desired outcomes even before the full implementation was completed.

The benefits were clear. The company enhanced the visibility of inventory management across its manufacturing sites, facilitating better planning and resource allocation. The company leveraged its data to drive operational efficiencies and enhance decision-making processes, all while maintaining a focus on the ultimate goal of achieving a fully optimized ERP system.

This water management company’s journey exemplifies the power of innovative thinking in addressing complex challenges. By prioritizing data accessibility and fostering a culture of analytics, the company not only navigated its transformation successfully but also positioned itself for future growth.

Key Learning: Implementing a unified layer of analytics enables companies to access and leverage data in real time, effectively bridging the gap between legacy systems and new technologies.

Embracing AI for a Modern Workforce

The journey at this global manufacturing company began with a leadership change, marked by the arrival of a new chief information officer (CIO) who recognized the urgent need for ERP modernization. With a significant overhaul of ERP on the horizon, the company faced the challenge of integrating numerous legacy systems across its manufacturing and distribution operations in North America. The imperative was clear: how could the company modernize its digital capabilities to keep pace with the evolving market?

The CIO quickly pinpointed a fragmented data ecosystem as a major obstacle, resulting from inadequate technology investments over the past decade. However, this realization also revealed numerous opportunities for innovation across various business functions, including aftermarket service, sales, warranty, and supply chain. The focus shifted to two critical objectives: building an enterprise data platform to enable a data-driven culture and incorporating AI into daily decision-making processes.

Initial plans for the AI strategy began to take shape during an innovation session, where the leadership team envisioned an AI platform as a tool for the company to learn and apply AI to its daily operations. This vision generated excitement and ownership around the initiative, marking the start of a transformative journey aimed at harnessing AI’s potential while establishing a robust data foundation.

“Motivated by the potential for a more conversational interface, the manufacturer aimed to leverage GenAI to streamline and improve service delivery.”

As planning progressed, the company’s existing structure required a careful approach to bridge the gap between employees and leadership, often from different geographies. Additionally, the implementation of AI tools required multilingual support to accommodate leaders who preferred communication in other languages. This dual focus on culture and language was essential for fostering acceptance and engagement.

As a manufacturing entity, the organization also grappled with the complexities of fragmented IT systems and siloed operations. Change management became a crucial aspect of the deployment, as building trust in the new systems proved challenging. It was recognized that without intentional investment in internal buy-in, the rollout could face roadblocks.

To empower the workforce, practical AI solutions were introduced to enhance daily operations. Simple yet effective use cases, such as summarizing virtual meetings and drafting emails, demonstrated the immediate benefits of AI integration. For instance, finance leadership leveraged the platform to analyze financial data securely, enabling quicker identification of gaps and opportunities. These early successes demonstrated the value of AI, fueling momentum for long-term initiatives and reinforcing the commitment to digital transformation.

Key Learnings: Successful AI integration requires strong cultural alignment, intentional change management, and practical applications that empower employees to embrace new technologies.

Revolutionize the Dealer Experience

This manufacturer’s story highlights proactive investment and strategic foresight. Over the past decade, the company has established a robust data foundation, cultivating a best-in-class ecosystem for managing connected products and fleet operations. With a high position on the data maturity scale, the company recognized the potential of generative AI (GenAI) to enhance its offerings, particularly in fleet management and aftermarket growth.

The rise of GenAI prompted leadership to explore how these technologies could transform the dealer experience. Although existing digital applications were effective, service technicians faced challenges navigating multiple screens for troubleshooting. Motivated by the potential for a more conversational interface, the company aimed to leverage GenAI to streamline and improve service delivery.

This intentional approach to innovation was reflected in the development of a service technician application that uses legacy knowledge documents and service manuals. By simplifying processes and enhancing customer service, the goal was to demonstrate the value of AI to leadership and secure further investment for scaling these initiatives.

Continuous feedback loops with the dealer network underscored the importance of customer-centricity. The company recognized that a strong advisory board could facilitate direct communication, ensuring dealer insights were integrated into the development process. This collaborative approach defined success criteria and fostered a sense of ownership among dealers, enhancing the overall experience.

There was also acute awareness of the need for responsible AI deployment. As the manufacturer embraced GenAI, it prioritized data security and governance, establishing guardrails to mitigate risks associated with external rollouts.

As with any transformative initiative, challenges arose. The complexity of coordinating cross-functional teams and managing diverse stakeholder expectations necessitated an iterative approach. By piloting solutions early and gathering feedback, the manufacturer ensured that innovations aligned with user needs and expectations.

Key Learnings: Leveraging AI for growth requires a customer-centric approach, continuous feedback loops, and a commitment to responsible innovation that addresses both opportunities and risks.

Conclusion

Organizations increasingly recognize the vital role of effective data management and analytics. Embracing modern technologies like AI and unified analytics enables companies to drive value through operational efficiencies. As they navigate the future, companies should consider how their data strategies can not only yield immediate results but also shape their long-term vision and purpose.

The views reflected in this article are those of the authors and do not necessarily reflect the views of Ernst & Young LLP or other members of the global EY organization.

About the authors:

Faisal Alam is EY Americas industrials and energy technology leader.

Farooque Munshi is EY Americas data and AI advanced manufacturing leader.

Gundeep Singh is principal, industrial products, Ernst & Young LLP.

Ellen McNally is manager for EY US technology consulting. She contributed to this article.

5 Key Questions on the Path to Industrial DataOps

Industrial DataOps enables manufacturers to be more agile, improve continuously, and move toward smart manufacturing.

TAKEAWAYS:

● Industrial DataOps solutions enable real-time data flows across hardware and software.

● Manufacturers can use data connectivity to respond quickly to emerging risks and constraints across their facilities and the supply chain.

● Manufacturers need to focus on turning raw data into useful information to drive decision-making.

Most manufacturers are on a technology journey, increasingly adopting automation, analytics, and active sensors. In the next two years, 41 percent of manufacturers plan to prioritize investments in factory automation hardware, and 40 percent plan to invest in analytics, according to Deloitte’s 2025 Smart Manufacturing and Operations survey.

The common denominator for these and other technology investments is data. As manufacturers advance, there is a fast-growing imperative to establish foundational capabilities in data quality, connectivity, and access. One effective approach to achieving this is through Industrial DataOps.

Many enterprises are currently working to leverage a unified namespace (UNS), an architectural strategy that centralizes data sources into a single source of truth. A UNS provides a real-time view of the business, and Industrial DataOps facilitates this process at scale. As data silos diminish through a UNS, Industrial DataOps solutions enable real-time data flows across hardware and software, whether at the edge, on-premises, or in the cloud. This robust data foundation paves the way for enhanced agility in the face of constraints, fosters new insights for continuous improvement, and supports the transformation to smart manufacturing.
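To make the UNS idea concrete, the following is a minimal in-memory sketch rather than a production design. It assumes a hierarchical topic convention (`enterprise/site/line/measurement`), which is a common way to organize a UNS but is not specified in this article, and it uses a plain Python class where a real deployment would typically use a message broker. The class name, topic names, and sample values are all hypothetical.

```python
from fnmatch import fnmatchcase
from typing import Any, Callable, Dict, List, Tuple


class UnifiedNamespace:
    """In-memory stand-in for a UNS broker (hypothetical, for illustration)."""

    def __init__(self) -> None:
        self._latest: Dict[str, Any] = {}  # single source of truth: last value per topic
        self._subscribers: List[Tuple[str, Callable[[str, Any], None]]] = []

    def subscribe(self, pattern: str, callback: Callable[[str, Any], None]) -> None:
        """Register a callback for every topic matching a shell-style pattern."""
        self._subscribers.append((pattern, callback))

    def publish(self, topic: str, value: Any) -> None:
        """Store the latest value and notify matching subscribers immediately."""
        self._latest[topic] = value
        for pattern, callback in self._subscribers:
            if fnmatchcase(topic, pattern):
                callback(topic, value)

    def snapshot(self, pattern: str = "*") -> Dict[str, Any]:
        """Current view of all topics matching the pattern, sorted by topic."""
        return {t: v for t, v in sorted(self._latest.items()) if fnmatchcase(t, pattern)}


uns = UnifiedNamespace()
alerts: List[Tuple[str, float]] = []


def on_temperature(topic: str, value: float) -> None:
    # Assumed threshold of 80 degrees C, chosen only for the example.
    if value > 80:
        alerts.append((topic, value))


uns.subscribe("acme/plant1/*/temperature_c", on_temperature)

# OT and IT sources publish into the same namespace.
uns.publish("acme/plant1/line2/temperature_c", 85.5)
uns.publish("acme/plant1/line2/oee", 0.71)
uns.publish("acme/erp/orders/open_count", 42)

plant_view = uns.snapshot("acme/plant1/*")
```

Here a shop-floor reading and an ERP figure land in the same structure, and any consumer can subscribe to just the slice of the hierarchy it cares about.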

In adopting an Industrial DataOps strategy, manufacturers should consider five essential questions:

- What criteria should shape our data product priorities for long-term impact?

- How can we foster local innovation without sacrificing global data standards or creating silos?

- How do talent and structure limit our ability to turn data into actionable information, and how can we bridge those gaps?

- What cultural and operational shifts are needed to embed and scale DataOps sustainably?

- How can we design our DataOps strategy to support future AI needs while managing data quality and governance?

1. Shaping Data Priorities for Long-Term Impact

With DataOps, manufacturers convert centralized information into actionable insights. Manufacturers grapple with large, complex data flows from IT and operational technology (OT) infrastructure. With numerous opportunities available, it can be challenging to determine where to begin.

To achieve widespread DataOps use, a data product strategy can be implemented incrementally, capturing value along the way. Initially, manufacturers might focus on the factory floor, developing data products related to bills of materials, parts and inventory, and work instructions. These early solutions demonstrate value, foster relationships and repeatability, and lay the groundwork for more ambitious DataOps applications.

“To achieve widespread DataOps use, a data product strategy can be implemented incrementally, capturing value along the way.”

Once progress is made at the factory level, manufacturers can broaden their focus to data products that span facilities. These might include supply chain data products to clarify incoming supply and demand, products for product lifecycle management (PLM) as it relates to engineering, products for orders and customer demand, and downstream products for quality control and aftermarket service.

Ultimately, manufacturers should start by identifying specific business problems that data products can resolve while also envisioning how to maximize value delivery at scale.

2. Foster Local Innovation without Sacrificing Standards

The emergence of generative AI (GenAI) has sparked a wave of experimentation across various levels, from the factory to the enterprise. New proofs of concept (POCs) have emerged in many areas, raising challenges around AI governance and the coordination of experiments within a broader, strategic framework. Similarly, DataOps presents the challenge of balancing innovation with control. Organizations must grant teams a degree of freedom to experiment while also ensuring adherence to standards critical for maintaining interoperability, scalability, and data quality.

Discovering where DataOps can unlock visibility may be best approached with both top-down and bottom-up strategies for identifying opportunities. For example, building data products for PLM is a global initiative, as products should be consistent across the manufacturing footprint.

Conversely, when it comes to OT data within the factory walls (e.g., from SCADA systems), manufacturers can manage data products at the facility level while leveraging tools and frameworks defined at the enterprise level. This approach allows for innovation within facilities that is permitted, governed, and strategically aligned.

3. Bridging Gaps and Turning Data into Action

Implementing DataOps requires specific technical skillsets. Manufacturers will need data engineering talent to plan and implement a UNS, as well as data architects to manage data structure. Cloud computing skills are essential for setting up platforms and infrastructure to capture the right data (rather than all data).

“Some manufacturers may pursue building services and solutions based on their data products, making the development of DataOps a core competency.”

However, merely collecting data is not sufficient. Manufacturers must also focus on transforming raw data into meaningful information that can drive decision-making and insights. This requires data owners within the business who are accountable for managing and maintaining data quality, context, and relevance. In the long term, as the data foundation supports increasingly ambitious automation, there will likely be a heightened demand for skilled talent in data science, machine learning, and AI modeling.

To attract top candidates, manufacturers may need to increase pay rates and ensure their global workforce strategy is guided by leaders with robust skills and experience in data and technology.

4. Cultural and Operational Shifts for DataOps

Even with the right skilled labor, adopting a UNS and DataOps can be challenging. Manufacturers will require an ecosystem of partners and providers with the necessary tools and capabilities to support their journey. Some manufacturers may pursue building services and solutions based on their data products, making the development of DataOps a core competency.

For most manufacturers, however, this domain is often deprioritized because, while it is foundational, it typically does not serve as an immediate performance differentiator. Achieving success requires a balance between daily operations, limited talent and funding, and the complexities of data product development and management.

Manufacturers must make important decisions regarding where to deploy, when to scale, how much control to retain or delegate, and how to coordinate within the strategic ecosystem. Engaging partners to manage DataOps on behalf of the manufacturer may offer an expedient, cost-effective way to access the right talent and competencies.

5. DataOps Strategy Design for Future AI Needs

AI relies on high-quality inputs, and a UNS with well-designed data products transforms raw data into assets ready for AI training and deployment. The ability to manage data in a repeatable, cost-effective way is essential for realizing AI’s full potential value. For instance, if a manufacturer seeks to deploy a sensing system on the factory floor to detect and correct human error, such a deployment requires the right data products to ensure repeatability.

“Investing in DataOps enables manufacturers to build predictable data sets where scale is known, and data are governed and owned.”

Some manufacturers have explored limited AI deployments that are relatively straightforward. For example, a GenAI-enabled chatbot navigating work instructions is a deployment that requires minimal DataOps. However, an ambitious smart manufacturing vision does require DataOps, and as Deloitte research shows, nearly all (92 percent) manufacturers view smart manufacturing as the primary driver of competitiveness over the next three years. From this perspective, DataOps is a competitive imperative.

Investing in DataOps enables manufacturers to build predictable data sets where scale is known, and data are governed and owned. This level of data maturity is essential for the future of smart manufacturing. Longer term, DataOps serves as the gateway to developing fully autonomous agentic systems and unlocking the greatest rewards from software-defined manufacturing.

Compounding Outcomes with Industrial DataOps

Industrial DataOps will become increasingly important as more devices and factories come online. Connectivity and real-time data access allow manufacturers to extract more value from their data today, with even greater longer-term potential.

Data connectivity and access help manufacturers understand and quickly respond to emerging risks and constraints across facilities, the supply chain, and the marketplace. For continuous improvement, DataOps highlights areas where plans and operations can be enhanced or adjusted. Additionally, data quality and a foundation for AI use cases can help mitigate skilled labor shortages and attract new talent to the industry.

Data are a strategic asset and, like any enterprise asset, must be leveraged to achieve business goals. Industrial DataOps serves as a vital bridge between the rapidly growing data flows of today and ambitious smart manufacturing visions of tomorrow.

About the authors:

Patricia Henderson is a principal in AI & Data with Deloitte’s Industrial Products & Construction Practice.

Rohini Prasad is a manager in Deloitte’s Strategy and Operations Group with Supply Chain & Network Operations.

Survey: Manufacturers See Data, Lack Strategy

Despite persistent challenges, data has made measurable impacts on performance and decision-making.

KEY TAKEAWAYS:

● Manufacturers recognize that data is the essential fuel for digital transformation, but without governance and analytics, they lack the “map” to turn data into strategy.

● While many companies report measurable gains in efficiency, cost reduction, and decision-making from data use, nearly half still lack a corporate-wide governance plan and processes to ensure data quality.

● Disparate systems, legacy equipment, and limited organizational data skills remain major obstacles, yet manufacturers increasingly view data as a quantifiable business asset tied to strategic outcomes.

Manufacturing data is the commodity that promises manufacturers a pathway to operational excellence. It can also act as an accelerant toward discovering new markets, new customers and innovation for both products and processes. Coupled with the urgency that many companies feel to bring AI into the business, there is no question that data is the necessary fuel for the digital transformation journey.

But if data provides the pathway, then governance and analytics are the map. Creating a map that is accurate relies on validated, contextualized, high-quality data. But met with the common challenges of disparate systems, legacy equipment, and a lack of organizational data skills, many manufacturers are instead left frustrated that the wayfinding they so desire is just out of reach.

Despite those challenges, manufacturers are seeing significant progress in utilizing data to make better decisions, while also increasing the frequency with which those data-driven decisions are made. They are seeing improvements in cost reduction, speed and quality. A growing number of companies are determining how to best put a quantified value on their data, adding a direct tie to the bottom line.

For manufacturers who diligently strive toward forging that data-driven pathway, improved business outcomes lie on the route ahead.

SECTION 1: Data is Valuable – Strategically and Financially

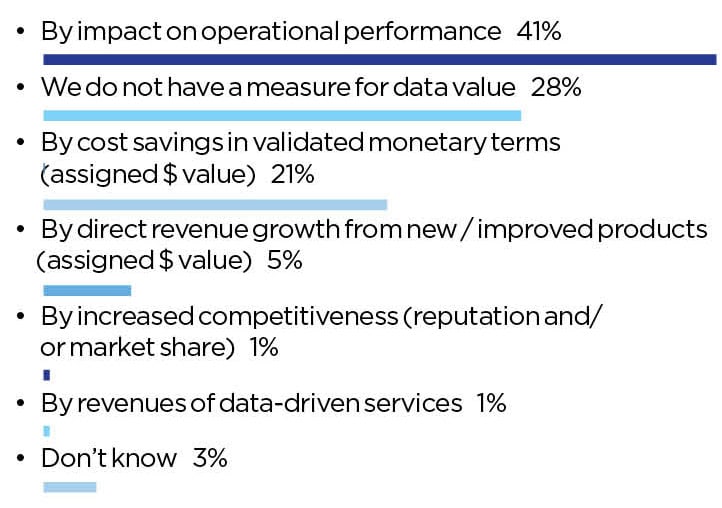

It could be said that data, like happiness, really can’t have a price tag, because it is by nature priceless. In seeking to assign a value that aligns with business goals, companies measure data differently. The greatest share of respondents reported that data’s value was measured by impact on operational performance (41%), while around a fifth (21%) said that data is assigned a dollar value.

Still, nearly a third of respondents (28%) said that they do not have any such measure for their manufacturing data (Chart 1). Without such quantifiable measures, leaders can find it challenging to gain support and funding for data-related investments or to initiate data pilot projects.

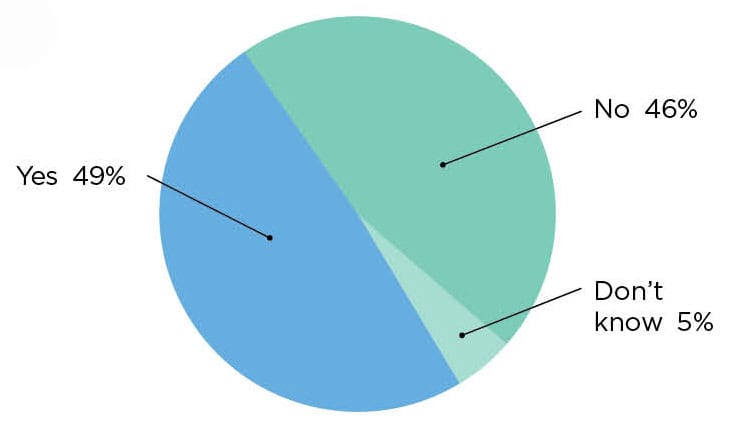

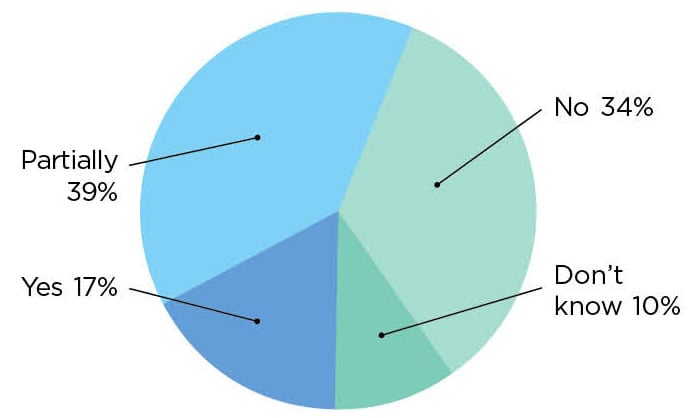

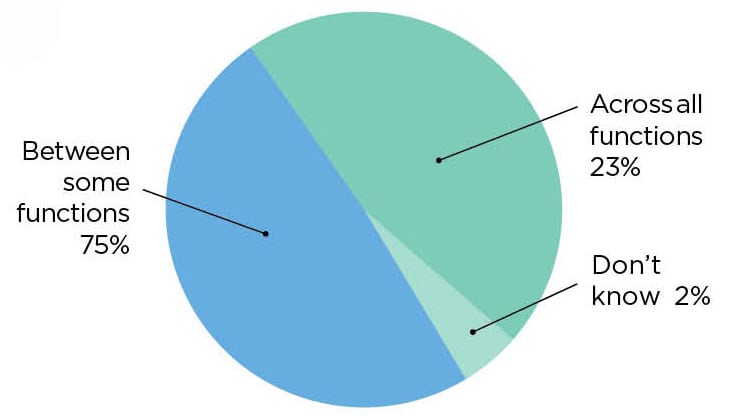

Likewise, the structure for making that data-driven map is lacking at nearly half of all manufacturers: 46% of respondents said that their companies lack a corporate-wide data governance plan (Chart 2). As data-sharing is fairly common for internal cross-functional teams (75%) (Chart 5), it seems likely that more companies will move toward a more strategic approach.

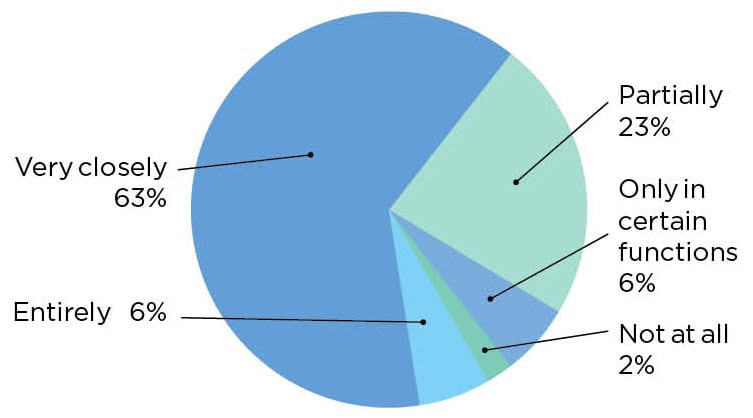

For companies that do have a data strategy, there is a payoff in alignment with overall corporate business strategy: 70% say their company’s data strategy aligns with overall business strategy either entirely or very closely (Chart 3). And while only 17% of executives currently find their annual incentives or KPIs tied to data collection and management (Chart 4), it’s not hard to foresee a future where this becomes more common.

1. Data is Valued by Performance and Cost Savings

Q: How do you measure the value of the data in your organization? (Select one)

2. Companies Divided on Corporate-Wide Governance

Q: Does your company have a corporate-wide governance plan, strategy, or formal guidelines for how data is collected, organized, accessed, and utilized across the enterprise, including manufacturing operations? (Select one)

3. Data Strategies Closely or Partially Align with Business Strategies

Q: How closely do you feel this data strategy is aligned to your company’s overall business strategy? (Select one)

4. Data Management has Tentative Ties to Executive Incentives

Q: Is data collection/management/analysis included in annual incentives, KPIs, or business imperatives for company executives/leadership? (Select one)

5. Cross-Functional Data Sharing Has Limited Reach

Q: Is data routinely shared cross-functionally in your company? (Select one)

SECTION 2: The State of Data Sourcing and Quality

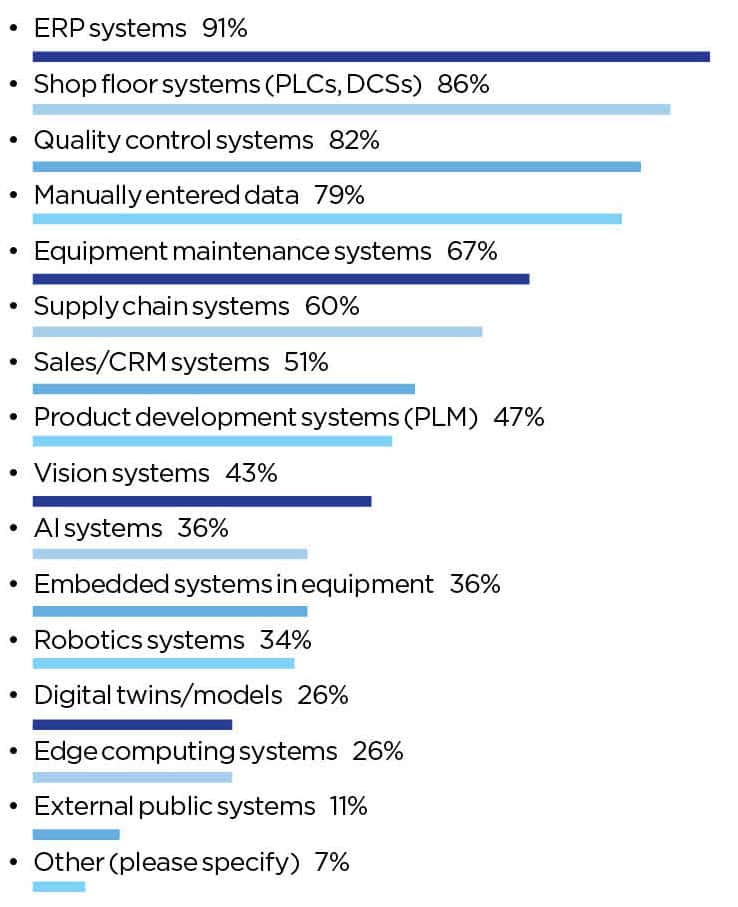

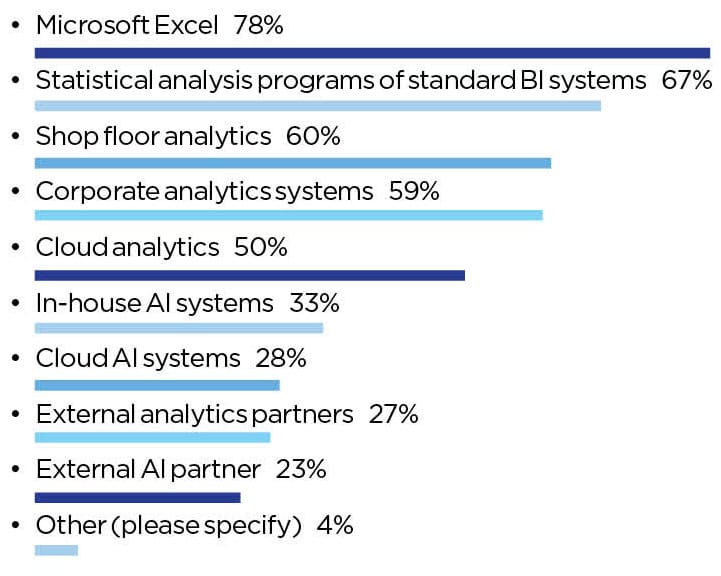

Most frequently, the well of manufacturing data is made up of enterprise-level data (ERPs), shop floor systems, and quality control systems (Chart 6). That said, 79% of manufacturers also include manually entered data—a potential source of data contamination downstream. Microsoft Excel still reigns as the undisputed champion for data analytics (Chart 7).

Respondents were somewhat pessimistic about their companies’ ability to collect the right data for business needs (Chart 8), and far less confident in their ability to collect AI-ready data (Chart 9). Alarmingly, only 39% said that their company has a process to verify data accuracy and quality (Chart 10).
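A verification process does not have to start out elaborate. As a rough sketch of what basic checks on manufacturing data might look like, the function below flags missing values, out-of-range readings, and duplicate entries. The field names (`machine`, `timestamp`, `value`), the valid range, and the sample rows are assumptions made for illustration and do not come from the survey.

```python
from typing import Dict, List


def check_readings(rows: List[dict], low: float, high: float) -> Dict[str, List[int]]:
    """Return the row indexes that fail each basic quality check."""
    issues: Dict[str, List[int]] = {"missing": [], "out_of_range": [], "duplicate": []}
    seen = set()
    for i, row in enumerate(rows):
        value = row.get("value")
        machine = row.get("machine")
        if value is None or machine in (None, ""):
            issues["missing"].append(i)  # completeness check
        elif not (low <= value <= high):
            issues["out_of_range"].append(i)  # plausibility check
        key = (machine, row.get("timestamp"))
        if key in seen:
            issues["duplicate"].append(i)  # same machine and timestamp seen before
        seen.add(key)
    return issues


# Made-up rows, including the kind of slip that manual entry can introduce.
rows = [
    {"machine": "press-1", "timestamp": "2025-01-01T08:00", "value": 71.2},
    {"machine": "press-1", "timestamp": "2025-01-01T08:00", "value": 71.2},    # entered twice
    {"machine": "press-2", "timestamp": "2025-01-01T08:00", "value": None},    # left blank
    {"machine": "press-3", "timestamp": "2025-01-01T08:00", "value": 7120.0},  # misplaced decimal
]

report = check_readings(rows, low=0.0, high=200.0)
```

Checks like these are most useful at the point of entry, before questionable rows flow into downstream analytics or AI training sets.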

6. ERP, Shop Floor and Quality Control Systems Lead the Data Supply

Q: What are the sources of your manufacturing data today? (Select all that apply)

7. Microsoft Excel is the Most-Used Data Analytics Tool

Q: What systems do you use to analyze the manufacturing data you collect? (Select all that apply)

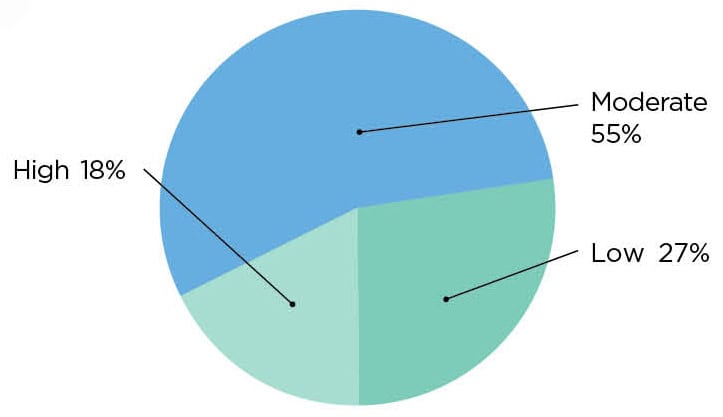

8. Companies are Moderately Skilled at Collecting the Right Business Data …

Q: How would you rank your company’s ability to collect the right data the business needs from your manufacturing operations? (Select one)

9. … But are Less Skilled at Collecting AI-Ready Data

Q: How would you rank your company’s ability to collect AI-ready data? (Select one)

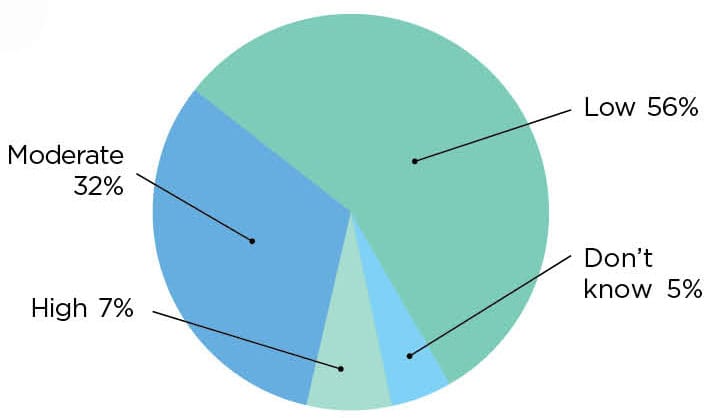

10. Only 4 in 10 Have a Process to Verify Data Accuracy or Quality

Q: Does your company have a process to verify the accuracy and quality of manufacturing data? (Select one)

SECTION 3: Data Has Proven Value – But Challenges Remain in Realizing It

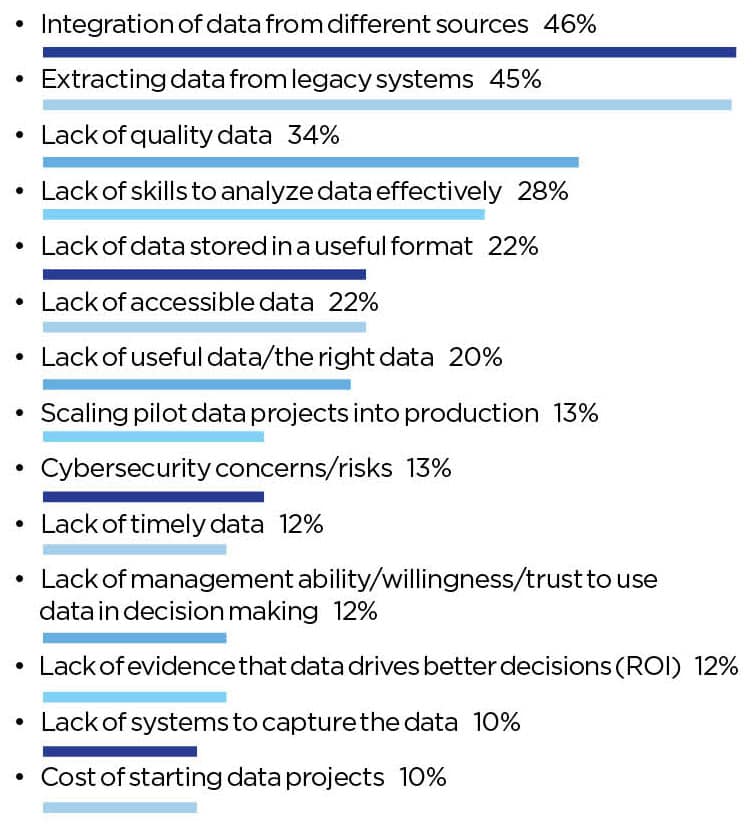

What stands between manufacturers and this promising data-driven future? The most persistent challenges include data from disparate sources (46%), the ability to extract data from legacy systems (45%), and the lack of quality data (34%) (Chart 14).

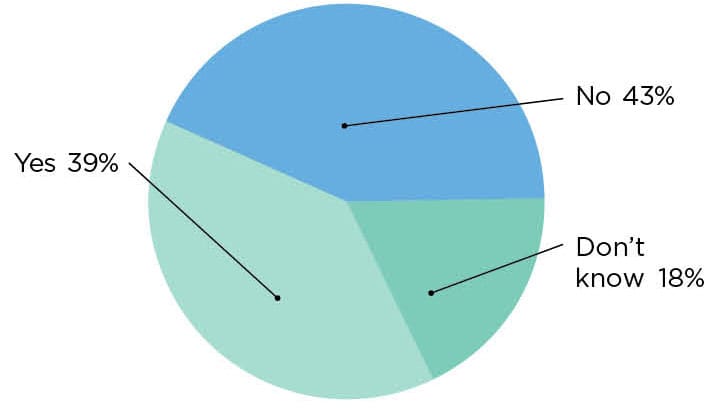

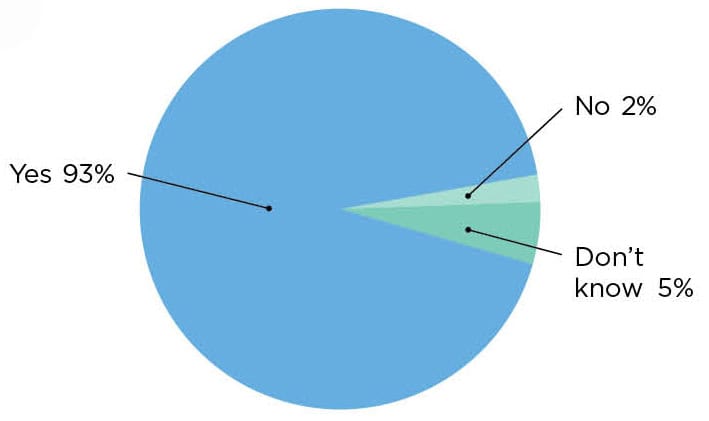

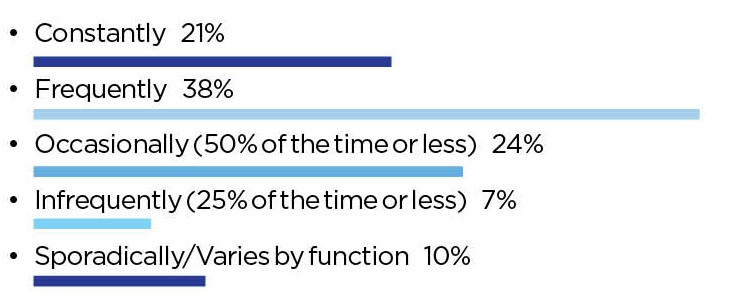

Despite the halting steps along the way, manufacturers are seeing true value from operational data. The highest level of impact has been seen on cost reduction, efficiency and quality (Chart 11). Overwhelmingly, respondents say that data has improved their company’s decision-making process (93%) (Chart 12).

11. Cost Reduction, Speed and Quality All See Improvements

Q: What degree of impact has manufacturing data had in improving your manufacturing organization since the start of your digital journey?

12. 93% Say Using Data Improves Decision-Making

Q: Has the use of data improved your company’s manufacturing decision-making process? (select one)

13. Data-Driven Decisions Gaining in Frequency

Q: How often would you say your organization makes data-driven decisions today? (Select one)

14. Integration, Extraction, Quality Create Barriers to Leveraging Data

Q: What are the most important challenges or obstacles hindering your organization from making more data-driven decisions? (select top three)

About the author:

Penelope Brown is Senior Content Director at the Manufacturing Leadership Council.

Digital Transformation Project Success Depends on Quality Data

Focusing on the quality of data is a critical factor determining the success or failure of transformational projects.

TAKEAWAYS:

● Successful transformational projects have enabled manufacturers to gain a competitive edge by lowering costs, providing better customer service and enabling new innovative business models.

● Not enough attention has been given to the impact that data and data quality have on determining the success or failure of transformational projects.

● Data considerations are becoming even more critical as AI is evolving to become a major component for all future transformational projects.

Whether called Industry 4.0, Manufacturing 4.0 or just digital transformation, it is well understood that manufacturers need to transform to compete effectively and be prepared for the future. Embedded technologies with connected processes are enabling faster innovation, reduced latency and more resilience throughout their organizations. Successful projects have enabled manufacturers to gain a competitive edge by lowering costs, providing better customer service and enabling new innovative business models. The challenge is that not every digital transformation project that a manufacturer embarks on is successful. In a 2023 survey, the World Economic Forum noted that 70% of companies investing in Industry 4.0 technologies fail to ever move out of the pilot phase of the project. Bain and Company noted in 2024 that 88% of business transformations fail to achieve their original ambitions.

Much has been written about why projects fail, with the predominant focus on the three pillars of people, process and technology. Lack of executive commitment, inadequate company resources, immature technologies and unavailable skillsets are all pointed to as reasons that projects fail. Another area where not enough attention has been given is the impact that data and data quality have had on determining the success or failure of transformational projects. Having been involved in many transformational projects, I have seen first-hand the impact that bad or incomplete data have had on project success. Automating a business process with bad data only results in getting to the wrong result faster.

Data considerations are becoming even more critical as AI is evolving to become a major component for all future transformational projects. AI has always been heavily dependent on data, generating poor results when tasks such as forecasting, anomaly detection or planning optimization are based on bad data. The common phrase “garbage in, garbage out” reflects the impact that bad data has on results. Now with generative AI, having the right quality data has become even more critical. With Large Language Models (LLMs) being trained on the public internet and with AI models built on incomplete or other suspect data, it should be no surprise that the results of a recent MIT study showed that 95% of AI pilot projects failed to deliver financial benefits. While there are other contributing factors, including the isolated nature of these projects, the quality of the data directly impacts the results.

Understanding and proactively addressing data issues can bring business benefit and help resolve challenges before they have a negative project or business impact. A big driver for project failure is the lack of active user engagement or working outside the system as defined. Having a data strategy early in a project cycle is critical as obtaining user buy-in or encouraging reliance on the system becomes much more difficult once the users lack confidence in the results. For the users that want to do a good job but come to believe that the new system is built on or generates bad data, it can be difficult to ever get them successfully reengaged.

Having a comprehensive data strategy is critical to project success and requires an understanding of the challenges and opportunities for addressing those challenges. Related to digital transformation, some of the data quality elements that have a direct impact on transformation projects can include:

- Data accuracy

- Data timeliness

- Data context

- Data volume

- Data completeness

- Data uniqueness

Data accuracy refers to the correctness of each piece of data. For example, creating a digitized automated process that pushes a customer order through the system falls apart when the item ordered, the build lead time, the bill of material, or the required inventory levels are wrong. Any of these can cause the order to be delayed and the customer to be disappointed. Data accuracy can be improved in a variety of ways including manual checks, automated checks, audits, cycle counting, etc. User acceptance testing (UAT) with real data is a fundamental component of verifying data quality and is normally a key component of any project schedule.
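
As a rough illustration of what an automated accuracy check on order data might look like, the sketch below tests one order line against a few simple rules. The field names, rules and item identifiers are hypothetical and chosen only for illustration; a real check would be driven by the business rules of the systems involved.

```python
from dataclasses import dataclass, field


@dataclass
class OrderLine:
    # Hypothetical fields mirroring the elements named above.
    item_id: str
    lead_time_days: int
    bom: list = field(default_factory=list)  # bill of material components
    on_hand_qty: int = 0


def accuracy_issues(line, known_items):
    """Return a list of human-readable problems found in one order line."""
    issues = []
    if line.item_id not in known_items:
        issues.append("unknown item")
    if line.lead_time_days <= 0:
        issues.append("non-positive lead time")
    if not line.bom:
        issues.append("empty bill of material")
    if line.on_hand_qty < 0:
        issues.append("negative inventory")
    return issues


# Illustrative data only.
known_items = {"PUMP-100", "VALVE-20"}
good = OrderLine("PUMP-100", 14, ["HOUSING", "IMPELLER"], 5)
bad = OrderLine("PUMP-999", 0, [], -3)

print(accuracy_issues(good, known_items))  # []
print(accuracy_issues(bad, known_items))
```

Checks like these catch only obviously invalid values; they complement, rather than replace, audits, cycle counting and UAT with real data.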

Directly related to data quality, the timeliness of the data can determine whether the data being used is still accurate at the time it is needed. When ChatGPT first came out, it provided amazing results. However, many of the results were based on data that was one to two years old, which made them incorrect at the time of the request. That works well for learning about historical events such as the Viking journey to Iceland; however, it is not very useful in evaluating the current state of your supply chain or your supplier’s risk factors related to their financial health. Every process and data element should have a specific requirement related to the timeliness of the data. It is important to know the requirements of the business and develop a specific strategy defining the requirements that are supported by integrations, inputs, dependencies or data feeds that meet your needs. This strategy should be clearly communicated to support required business processes and to set expectations properly.
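
One way to make a timeliness requirement explicit is to record a maximum acceptable age for each data element and test against it. The sketch below assumes hypothetical element names and thresholds; the actual values would come from the requirements of the business.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical timeliness requirements per data element.
MAX_AGE = {
    "machine_status": timedelta(minutes=5),
    "inventory_level": timedelta(hours=4),
    "supplier_financials": timedelta(days=90),
}


def is_stale(element, last_updated, now):
    """True when the element is older than its stated requirement allows."""
    return now - last_updated > MAX_AGE[element]


now = datetime(2025, 10, 1, 12, 0, tzinfo=timezone.utc)
print(is_stale("machine_status", now - timedelta(minutes=2), now))  # False
print(is_stale("inventory_level", now - timedelta(hours=6), now))   # True
```

Writing the thresholds down in one place also helps with the communication point above, since users can see how fresh each element is expected to be.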

Context is important. There was an injection molding company where connecting the equipment provided real-time results based on performance. The early results clearly showed that the evening and weekend shifts were underperforming. It would be easy to attribute this to lack of supervision or employees slacking off. With additional data, it was determined that the real culprit was a lack of support staff, including machine maintenance and material movement/stock support. Context is especially important when reporting on and providing data analysis. While AI is becoming a useful tool to provide additional context, the way that prompts are worded can have a direct impact on the resulting analysis.

While data is critical, having more data is not always better. If the data volume is so great that you are unable to analyze or make good use of it, it can be constraining. For example, IoT provides the connectivity that Industry 4.0 depends upon and is a key success factor for Industry 4.0 projects. Over the last few years, almost all manufacturing machines have come with multiple sensors for connectivity, and companies have aggressively moved to connect their machines with IoT. While there have been good advancements in automated service or maintenance execution, the real benefit received across manufacturing companies has still been somewhat limited. One reason is that IoT produces large amounts of data that are difficult to analyze, and the available analytical tools have not been robust enough to do so. New AI technologies can now help resolve these challenges by quickly reviewing and analyzing vast amounts of data to make sense of it.

Data completeness and uniqueness impact accuracy, creating significant challenges that are made more difficult by a multitude of overlapping data source systems. Manufacturers are benefiting by consolidating systems to minimize required integrations to address this problem. Where this is not possible, a best practice is to have a single master for all data. While complementary systems can serve to enrich the data, no data element should be mastered in more than one system. This is where a master data management strategy is leveraged to define where data will be housed and how it will be used. Planning for this at the beginning of a project reduces unforeseen issues later.
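
The single-master rule lends itself to a simple automated check. The sketch below assumes a hypothetical inventory of which system claims to master which data element and reports any element claimed by more than one system; the system and element names are illustrative only.

```python
from collections import defaultdict


def find_conflicting_masters(master_claims):
    """Given (system, data_element) pairs, return elements with several masters."""
    systems_by_element = defaultdict(set)
    for system, element in master_claims:
        systems_by_element[element].add(system)
    return {
        element: sorted(systems)
        for element, systems in systems_by_element.items()
        if len(systems) > 1
    }


# Hypothetical inventory of master claims.
claims = [
    ("ERP", "item_master"),
    ("ERP", "bill_of_material"),
    ("MES", "routing"),
    ("PLM", "bill_of_material"),
]
conflicts = find_conflicting_masters(claims)
print(conflicts)  # {'bill_of_material': ['ERP', 'PLM']}
```

Running a check of this kind at the start of a project is one way to surface overlapping ownership before integrations are built around it.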

As manufacturers continue to embark on these transformational journeys to digitize their business and provide seamless connected processes, focusing on the quality of their data will be a critical factor determining their success.

About the author:

John Barcus is Group Vice President, Manufacturing Industries & Emerging Technologies at Oracle.

The Industrial Data Foundation Imperative: Building Manufacturing’s AI Future

Manufacturing leaders must prioritize industrial data readiness and governance now, as the gap between data-ready organizations and laggards threatens future AI competitiveness.

TAKEAWAYS:

● Organizations with mature data foundations are achieving greater value from their AI initiatives compared to those rushing into AI without proper data preparation.

● Successful manufacturers are treating industrial data as a strategic asset, implementing governance frameworks that balance innovation with security and compliance.

● Companies that establish strong data contextualization and standardization practices are three times more likely to scale AI implementations successfully across their operations.

The manufacturing industry stands at a critical juncture. While AI promises transformative benefits, many organizations are discovering that their rush toward AI implementation is hampered by a fundamental challenge: inadequate industrial data management. Without a robust data foundation, even the most sophisticated AI initiatives will fail to deliver meaningful results.

The Hidden Complexity of Manufacturing Data

Manufacturing data presents unique challenges that distinguish it from traditional enterprise data. Unlike the structured information found in typical business applications, industrial data is predominantly time-series data originating from diverse sources including PLCs, machine controllers, IoT sensors, and legacy systems, and often exists in incompatible formats across facilities. This complexity results in the “industrial data management challenge,” which remains multifaceted and encompasses managing data contextualization, scaling and technical expertise requirements.

The consequences of poor data management are severe for AI initiatives. Organizations frequently find themselves unable to expand successful AI pilot projects beyond single use cases, struggling with integration bottlenecks that starve AI models of the data they need, and lacking the cross-facility standardization necessary for enterprise-wide AI deployment. Most critically, without proper industrial data foundations, industrial AI initiatives become expensive experiments rather than business transformations that deliver measurable ROI.

From Expert Dependency to AI Data-Driven Agility

The transformation toward data-driven manufacturing represents more than a technological upgrade; it is a fundamental business process evolution that prepares organizations for AI integration. Traditional manufacturing and supply chain operations rely heavily on sequential, expert-dependent decision-making where actions wait for specialist input, creating bottlenecks and limiting organizational agility. Data maturity enables a shift to concurrent decision-making models where multiple processes can operate simultaneously. It also enables real-time insights for employees to make better decisions, faster. Ultimately, data maturity will also enable AI-driven automation to optimize systems and processes in real time and solve problems with human-like reasoning but machine-scale efficiency.

This evolution naturally aligns with established lean manufacturing principles while creating the foundation for AI enhancement. While traditional lean practices rely on manual observation and periodic improvement events, data-driven organizations can continuously monitor operations and automatically identify inefficiencies in real time, setting the stage for AI systems to not just identify issues but autonomously resolve them. The traditional DMAIC (Define, Measure, Analyze, Improve, Control) cycle accelerates dramatically when supported by advanced analytics and AI, as what once took weeks can be compressed into near-instantaneous insights and actions.

The Three Stage Journey to AI-ready Industrial Data Maturity

Successfully building an industrial data foundation for AI requires progressing through three distinct maturity stages, each building on the previous stage’s capabilities and unlocking new levels of AI capability.

Stage 1: Data Foundation – Preparing for Basic AI Applications

This stage focuses on establishing fundamental digital capabilities that enable initial AI deployment. Organizations transition from manual, paper-based processes to automated data collection systems while digitizing operational documentation. Key achievements include centralized production data repositories, real-time visualization of machine status, and basic KPI tracking across operations. At this stage, companies typically see immediate wins through less time spent on data collection, less variation in how it is done, and improved visibility into production metrics. From an AI readiness perspective, this stage establishes text-searchable operational documents, basic image repositories for quality and maintenance, and standardized data formats that AI models can consume. Organizations can begin implementing simple AI applications like automated quality inspection using computer vision or basic predictive models for equipment monitoring. The focus is on creating clean, labeled datasets that serve as training data for more sophisticated AI implementations in later stages.

Stage 2: Data Intelligence – Enabling Predictive AI

This stage introduces sophisticated analytics and cross-functional integration while implementing AI-powered solutions. Organizations deploy predictive maintenance systems powered by machine learning, optimize production schedules using AI algorithms, and develop models that continuously improve process parameters. The hallmark of this stage is the ability to predict and prevent issues rather than merely react to them, a crucial capability for advanced AI applications. AI readiness advances significantly at this stage through established processes for validating AI-generated content against domain expertise, initial implementation of AI assistants for technical documentation retrieval, and integration of structured operational data with unstructured documentation. Organizations can deploy chatbots that help operators access troubleshooting guides, implement generative AI for creating maintenance reports, and use AI to analyze quality patterns across multiple variables. Companies often report reductions in unplanned downtime and significant improvements in quality consistency as AI models learn to identify subtle patterns human experts might miss.

Stage 3: Intelligence Enterprise – Autonomous AI Operations

This stage represents the pinnacle of AI-enabled data maturity, where autonomous systems make real-time operational adjustments while human workers focus on high-value activities. Digital twins, powered by AI, simulate production scenarios before physical implementation, and new as-a-service-based business models emerge from AI-driven insights. Organizations at this level frequently achieve significant reductions in time-to-market through AI-enhanced digital simulation and testing. At this advanced stage, AI readiness reaches full maturity with domain-specific foundation models trained on proprietary manufacturing data, autonomous systems augmented with generative AI for complex decision support, and comprehensive AI governance frameworks. Organizations deploy AI agents that can define project elements and communicate tasks simultaneously to multiple acting AI agents, enabling parallel processing with minimal interpretation errors. Advanced applications include AI systems that explain their recommendations in natural language, synthetic data generation for training specialized models, and AI-driven business model innovation such as product-as-a-service offerings. The convergence of AI and operational technology creates autonomous manufacturing environments where human expertise is amplified rather than replaced.

Building Effective AI-Ready Data Governance

Creating a robust data governance framework for industrial AI requires addressing several critical dimensions simultaneously. Organizations must first establish clear data ownership and stewardship roles, ensuring that someone is accountable for data quality and integrity across all operational systems. This is particularly crucial since AI models are only as good as the data they are trained on. That accountability includes implementing standardized data formats and validation processes that ensure AI models can reliably consume data across facilities and equipment types.

Equally important is developing metadata management capabilities that preserve context as data moves through various systems and AI applications. Manufacturing data without proper context—understanding what equipment generated the data, under what conditions, and how it relates to other process variables—loses much of its analytical and AI training value. Successful AI-ready governance frameworks also include access controls that balance data democratization with security requirements, ensuring AI systems can access necessary data while protecting intellectual property and maintaining operational security.
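
To make the idea of preserved context concrete, the sketch below shows one possible shape for a sensor reading that carries its context with it, plus a check for missing context fields. The record layout, field names and sample values are assumptions for illustration and do not describe any specific platform or standard.

```python
from dataclasses import dataclass, asdict
from datetime import datetime, timezone


@dataclass(frozen=True)
class SensorReading:
    # Hypothetical record: the measured value plus the context around it.
    value: float
    unit: str
    equipment_id: str      # what equipment generated the data
    site: str
    recorded_at: datetime
    operating_mode: str    # under what conditions it was generated
    related_variable: str  # how it relates to other process variables


REQUIRED_CONTEXT = ("unit", "equipment_id", "site", "operating_mode")


def missing_context(reading):
    """List the required context fields that are empty on this reading."""
    record = asdict(reading)
    return [name for name in REQUIRED_CONTEXT if not record[name]]


reading = SensorReading(
    value=72.4,
    unit="degC",
    equipment_id="PRESS-07",
    site="",  # context lost somewhere upstream
    recorded_at=datetime(2025, 10, 1, 8, 30, tzinfo=timezone.utc),
    operating_mode="production",
    related_variable="barrel_pressure",
)
print(missing_context(reading))  # ['site']
```

A validation step like this, applied as data moves between systems, is one way to detect where context is being dropped before the data reaches analytics or AI training.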

The Path Forward: Building Your AI-Ready Foundation

The window for establishing competitive advantage through AI in manufacturing is narrowing rapidly. Organizations that delay building proper industrial data foundations risk being left behind by competitors who recognize that AI success requires systematic preparation rather than rushed implementation. The three-stage maturity model provides a clear roadmap: start with digitizing and centralizing operational data, progress to predictive analytics and AI-assisted operations, and ultimately achieve autonomous AI-driven manufacturing excellence.

The choice facing manufacturing leaders is clear: invest in building robust industrial data foundations that enable transformative AI capabilities. The alternative risks competitive obsolescence as AI-enabled competitors gain generational advantages in efficiency, quality, and innovation. The time for cautious advancement has passed: the AI-powered future of manufacturing belongs to those who act decisively today.

About the author:

Ashtad Engineer is Worldwide Head of Manufacturing Solutions for the Auto & Manufacturing Industry Business Unit at AWS.

Dialogue: Agentic AI Moves from Insights to Action

NTT DATA’s Prasoon Saxena sees agentic AI as the next leap in manufacturing—necessary to accelerate cycles, fill labor gaps, increase global competitiveness, and reshape how humans and AI work together

Agentic AI is shifting the conversation in manufacturing from simply generating insights to executing real-world tasks. Prasoon Saxena, global president and co-lead—Products Industries at NTT DATA, Inc., explains how this new class of AI is already helping companies slash engineering cycle times, support an overstretched workforce, and unlock new levels of productivity. In this special Future of Manufacturing Project-focused Executive Dialogue, he shares why trust, governance and training are critical as manufacturers prepare for a future where humans and AI agents operate side by side.

STEVE MOSKOWITZ: It seems like talk of agentic AI has overtaken conversations about AI and GenAI. Can you tell us a bit about your perspective on the market right now?

PRASOON SAXENA: Is agentic AI overtaking the conversation around the GenAI and other AI topics? The answer is absolutely, yes.

What we have noticed in the market is that over the last four or five years, the agentic AI has been in the conversation. It started with GenAI, of course, and with the introduction of ChatGPT and with Copilot, etc., it was definitely helping us with the overall insights.

But what is missing is the execution with the GenAI solutions, and that’s where I think the agentic AI is going to take over. The clients are looking for not just getting the insights, but the execution of it. So insights-to-execution is where it is heading. We are seeing several use cases that are coming out in the market where agentic AI is being used.

SM: Can you describe a smart AI platform?

PS: Specifically at NTT DATA, Inc., we have developed a smart AI platform that is basically helping our clients to have an agent sitting with the humans and working jointly in the plants, on the shop floor, in the supply chain and whatnot.

We’re really taking that seriously and there’s a lot of interest in the market.

SM: Can you share an agentic AI use case?

PS: We see with a company called Continental, and this is public information that’s in the press, it is a $38 billion automotive supplier, and, if you see the engineering in the automotive, the engineering cycle is about six to eight years before you can see the products coming.

And, the Chinese have been able to release the products in two years. Why? Because they have adopted agentic AI solutions. So we worked with Continental, looking at the 27 different processes in engineering, and we picked about six processes. So we looked at six processes and we deployed the agentic AI solution. And we are seeing a productivity gain, almost a reduction from two years to six months.

So we are able to reduce the cycle time to six months, which is going to really help Continental with the overall benefit.

So yes, agentic AI is definitely taking over in the market as we see, and more and more you’ll see a lot more manufacturing companies will deploy it in their plants.

SM: Will agentic AI play a role in closing workforce gaps?

PS: I do want to highlight that in the North American market—especially with the tariffs situation and whatnot, and with manufacturing coming back—there are almost half a million jobs open right now.

And we expect that to go up to almost two million plus by 2030. And, there are not even enough humans to really do the work.

So it really makes sense for the companies to deploy agentic AI along with humans to develop the products in manufacturing.

I was with a CEO of a forklift company in Tokyo last week, and he believes that 70% of the work that they do in the company is repetitive. So he is looking at deploying agentic AI solutions so that he can invest the human capital in more innovation and driving transformation.

SM: What kind of cautions would you place on agentic AI adoption?

PS: Agentic AI solutions are fairly new in the market, and we need a governance mindset while deploying the agentic AI with the humans.

Do you trust the actions the agent takes? We don’t know that yet. Right?

So there’s always “good-to-haves” and checks and balances as you deploy the agentic AIs to make sure that actions that are taken by agentic AIs are in line with what is expected of them, and gradually increase their responsibilities.

Also, our human workforce in manufacturing has to go through change management and train themselves on how to coexist with the agentic AIs.

So it’s a journey that’s going to take some time. And it’s a trust that one has to build together, with the humans and AIs coexisting to really deliver the services for the plants.

It’s more, right? It’s not just looking at the data, but also trusting the actions of the agentic AIs because, the way they interpret the data and the way they’re going to execute it, may not be exactly what you want them to do. And there are some mistakes that have happened in the plants that I’ve heard.

So we have to just watch that carefully, and once we have built the trust with them, then start giving them more responsibilities and start expanding into other areas as well.

SM: What is the future of agentic AI?

PS: The answer to that is the multi-agent environment. You will see that there will be a multi-agent environment that is going to get deployed in the plants now, or just in manufacturing overall in different processes. Whether it is inventory balancing or production planning, you can have two agents talking to each other and getting the work done.

SM: Can you give an example of a multi-agent use case?

PS: Just as a case in point, we are working with a large automotive OEM.

A little bit of a background to that is that there’s an individual. His name is John, right? His real name is not John. I’m just making up the name.

We named this project Digital John. And the real John is going to retire in two years, and he has immense knowledge about a plant in Kentucky, but nothing is documented well.

So we’re using different techniques, AI and whatnot, to capture the knowledge and document it.

And then, we took one of the lines in the production plant and deployed agentic AI.

So, one, we captured the knowledge from John as he is retiring. And, two, is that we use the knowledge to automate one of the assembly lines.

And we are seeing over $2 million in savings by deploying agentic AI. So that’s really driving the productivity with this particular OEM.

There is a lot of interesting stuff that’s happening in the agentic AI space. The future is really bright as I can see with this technology.

SM: Can you talk about smart AI solutions for manufacturing?

PS: For smart AI, we have partnered with OpenAI to build solutions. So now what we are doing is we are basically taking it to each of the industries. Like in manufacturing, we are taking the smart AI solutions and looking at each of the business processes, and we are deploying the AI agents in first the horizontal, like the procurement services, the indirect procurement, the supply chain, etc., now and then taking it to the assembly line and the production plants.

We’re making the agentic AIs really learn from being deployed there and then driving the automation.

SM: How will humans and agentic AI coexist?

PS: Within North America, we will continue to have challenges with the trained workforce.

We have two issues. One, we don’t have enough labor to work in the plants. And the second is the training of the workforce itself with the technology. We have to train our workforce to be ready to work with the technologies like agentic AI.

So we have some work to do as manufacturers to bring our workforce up to be ready for the future.

And then you train them. You go through a change management process, so that they are comfortable working with the agentic AIs.

So, yes, there is a technology that’s available, but it’s up to us how we adopt it, how we embrace it, and how we deploy it.

SM: What role does infrastructure and data play in this?

PS: The second part of that is around the infrastructure and data.

Within just the North American market, what I have seen is that a lot of manufacturers are still lacking the basics: having the right data governance structure, capturing the right information and whatnot. Connectivity is an issue, for example. So, there is an issue around the foundational block. And, I think, that’s a real opportunity for the manufacturers to build a solid infrastructure as they deploy the agentic AI and whatnot.

So one is the training and the change management with the humans. The second is the foundational block, which is making sure that you have enough of a strong foundation to really deploy these types of solutions. And at NTT DATA we have all the capabilities to help you, not just with the foundational blocks but also with rolling out the agentic AI or any AI solutions in the future.

SM: If you could leave the audience with one final important takeaway about AI, what would it be?

PS: The important message from my perspective is that AI is pervasive, and it’s here to stay. Embrace it. Otherwise we will all be behind. And as we have seen, especially in the automotive sector, the Chinese have really taken the lead. So I don’t think we have enough time now. And we have to accelerate the adoption of AI solutions.

Portions of this interview have been edited for clarity and length.

About the author:

Steven Moskowitz, Ph.D., is senior director at the Manufacturing Leadership Council.

Welcome New Members of the MLC October 2025

Introducing the latest new members to the Manufacturing Leadership Council

Learn more about MLC membership.

Rodrigo Cambiaghi

Managing Partner, EY Digital Operations

EY

https://www.ey.com/en_us

https://www.linkedin.com/in/rodrigocambiaghi/

AI Turns Data Governance into a Middle Market Advantage

What was once expensive and burdensome is now practical, affordable, and essential.

TAKEAWAYS:

● AI is democratizing data governance, making it practical and impactful for middle market manufacturers.

● Clean data isn’t just technical hygiene—it’s a strategic advantage that drives operational efficiency and trust.

● Modern AI-powered platforms simplify governance, reduce errors, and bring governance directly into daily operations.

AI is at the center of the modern manufacturing conversation. From predictive maintenance to generative design, manufacturers are racing to capture its potential. But without high-quality data, AI falls flat.

AI can only be as good as the data that powers it. If that data is messy, incomplete, or siloed, the results will be inaccurate—or even misleading. Poor data quality costs organizations an average of $12.9 million per year.

For years, governance was out of reach for most middle market manufacturers. It required major IT investments, specialized skills, and years of effort. It was often treated as compliance—not a competitive lever. Thanks to AI, that’s changed. Governance is now faster, easier, and cost-effective, transforming from a back-office burden to a frontline advantage.

Three Catalysts Driving Adoption

AI-Powered Discovery

Agentic AI workflow solutions can automate the heavy lifting of creating data pipelines, applying transformations, and documenting processes. What once required weeks of coding can now be done in days, freeing teams to focus on applying insights instead of preparing data.

Lower Barriers to Entry

AI-driven platforms uncover inconsistencies, reconcile mismatched records, and track data lineage with minimal manual intervention. Middle market manufacturers—who once lacked the resources for governance—can now access enterprise-grade tools at a fraction of the cost. The result: faster results, lower costs, and fewer errors.
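As a rough illustration of what "reconciling mismatched records" involves, the sketch below compares part records from two systems. It is a minimal example under stated assumptions, not any vendor's method: the system names (`erp`, `mes`), the field names (`part_id`, `unit`), and the normalization rule are all hypothetical.

```python
from collections import defaultdict


def normalize_part_id(raw: str) -> str:
    """Canonical form (assumed rule): upper-case, letters and digits only."""
    return "".join(ch for ch in raw.upper() if ch.isalnum())


def reconcile(erp_rows, mes_rows):
    """Group records from two systems by normalized part ID and list mismatches."""
    merged = defaultdict(dict)
    for system, rows in (("erp", erp_rows), ("mes", mes_rows)):
        for row in rows:
            merged[normalize_part_id(row["part_id"])][system] = row

    issues = []
    for key, found in sorted(merged.items()):
        if len(found) < 2:
            # The part exists in only one of the two systems.
            missing = "mes" if "erp" in found else "erp"
            issues.append((key, f"missing in {missing}"))
        elif found["erp"]["unit"] != found["mes"]["unit"]:
            issues.append((key, "unit mismatch"))
    return issues


# Hypothetical sample records; the same part is spelled differently per system.
erp = [
    {"part_id": "ab-1001", "unit": "kg"},
    {"part_id": "AB 1002", "unit": "ea"},
    {"part_id": "AB-1003", "unit": "ea"},
]
mes = [
    {"part_id": "AB1001", "unit": "lb"},
    {"part_id": "ab_1002", "unit": "ea"},
]

issues = reconcile(erp, mes)
for part, problem in issues:
    print(part, problem)
```

Commercial platforms add fuzzy matching and lineage tracking on top of this kind of comparison, but the underlying idea is the same: agree on a canonical key, then report where systems disagree.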

Expanded Awareness

Governance is no longer confined to IT. Operations leaders and plant managers recognize that poor data leads to poor outcomes, whether in forecasting, scheduling, or supply chain performance. Clean data builds trust, accelerates decisions, and unites teams around a single version of the truth.

Why Middle Market Manufacturers Should Care Now

Margins are thinner, teams are leaner, and inefficiencies hurt more in the middle market. Bad data drives operational waste: duplicate work, flawed decisions, and costly rework. Historically, manufacturers filled gaps with intuition or tribal knowledge, but that no longer works in today’s fast-moving environment.

Governance enables fact-based decision-making. With governed data, manufacturers can pinpoint root causes, adapt to supply chain disruptions, and optimize production in real time. Without it, they risk reacting too late or misdiagnosing problems altogether.

AI only raises the stakes. Gartner predicts 60% of AI initiatives will fail to deliver business value by 2027. Other studies put that number closer to 80–85%. For middle market manufacturers, governance isn’t just IT hygiene—it’s a business-critical strategy.

From Theory to Practice: AI Makes It Work

Traditional governance stalled because it was slow, costly, and disconnected from daily work. AI has changed that. By embedding governance into workflows, AI allows manufacturers to manage data quality in real time.

Instead of hand-building scripts, AI enables teams to define desired outcomes and generate pipelines automatically. Errors are flagged early, and documentation is created instantly. Governance shifts from “fixing after the fact” to enabling usable, trusted data from the start.
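One way to picture "defining desired outcomes" is to state data-quality rules as data and let a generic validator flag violations as records arrive. The sketch below is illustrative only; the rule names and record fields (`line_id`, `cycle_time_s`, `output`, `scrap`) are assumptions, not drawn from any specific platform.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Rule:
    name: str
    check: Callable[[dict], bool]  # returns True when the record passes


# Hypothetical rules describing what a usable production record looks like.
RULES = [
    Rule("cycle_time_positive", lambda r: r.get("cycle_time_s", 0) > 0),
    Rule("line_id_present", lambda r: bool(r.get("line_id"))),
    Rule("scrap_within_output", lambda r: 0 <= r.get("scrap", 0) <= r.get("output", 0)),
]


def validate(records, rules=RULES):
    """Split records into clean rows and flagged rows with the failed rule names."""
    clean, flagged = [], []
    for record in records:
        failed = [rule.name for rule in rules if not rule.check(record)]
        if failed:
            flagged.append((record, failed))
        else:
            clean.append(record)
    return clean, flagged


# Hypothetical sample records.
records = [
    {"line_id": "L1", "cycle_time_s": 42.0, "output": 100, "scrap": 3},
    {"line_id": "", "cycle_time_s": 40.5, "output": 90, "scrap": 2},
    {"line_id": "L2", "cycle_time_s": -1.0, "output": 50, "scrap": 60},
]

clean, flagged = validate(records)
for record, failed in flagged:
    print(record["line_id"] or "<no line>", failed)
```

Because each flagged record carries the names of the rules it failed, the same output doubles as basic documentation of why a row was held back.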

But tools alone aren’t enough. The real payoff comes when governance is paired with process change. Clean data must actively inform decision-making. When governance and process improvements move together, companies unlock measurable gains in speed, quality, and cost.

Unlocking Fast Wins and Cultural Change

Many leaders assume governance takes years to show value. In reality, small pilots deliver impact fast. As soon as teams begin using governed data, they see fewer errors, shorter decision cycles, and more confidence in outcomes.

The bigger challenge is culture. After years of unreliable systems, skepticism runs deep. Overcoming that requires leadership. Executives must position data as a strategic lever, not a side project, and build trust through quick wins. A pilot that reduces downtime or shortens reporting cycles can spark belief across the organization.

Trust builds momentum. With each success, adoption grows, skepticism fades, and governance becomes how the business works—not an IT mandate, but a cultural norm.

A Call to Action

For middle market manufacturers, this isn’t just a technology trend—it’s a turning point. Data governance isn’t a barrier; it’s a business enabler. It reduces waste, accelerates insights, and lays the foundation for AI-powered resilience.

The technology is ready. The tools are affordable. The ROI is proven. What’s left is a choice: keep patching data problems, or fix them for good.

It’s time to stop duct-taping data issues. Let’s build a foundation that enables AI, accelerates decisions, and drives performance. Start small. Prove value. Build trust. And position your business to lead with clean, governed data.

About the authors:

Annemieke De Groot is Data & AI Governance Lead at West Monroe.

Jeff Pehler is Managing Director, Consumer & Industrial Products at West Monroe.

Scott Saueressig is Senior Manager at West Monroe.

AI with Open and Scaled Data Sharing in Semiconductor Manufacturing

Robust data sharing in a collaborative data ecosystem (CDE) scales qualified data and widens access to untapped operational advantages for manufacturers.

TAKEAWAYS:

● Smart Manufacturing leverages large volumes of industry-qualified data to orchestrate applications comprehensively at multiple operational scales, but data access remains a barrier.

● Data sharing combined with Data-first site strategies recognizes the need to first process raw operational data into AI-ready data before any AI, machine learning, or digital twin application can work.

● Manufacturers and engaged factory staff can agree and execute on cross-site data processes, guardrails, and shared workforce training for qualified, scalable, and trusted data sharing.

Smart Manufacturing (SM) is the orchestration of advanced digital technologies to construct scaled software systems. Data are used in artificial intelligence (AI), machine learning (ML), and digital twin (DT) applications to enable data-driven insights and decision-making, automation/autonomy, and scaled interoperability within and across physical and human control and management systems. This results in improved products, reduced energy and material usage, and enhanced productivity, responsiveness, and resilience in manufacturing operations, enterprises, and supply chains. The workforce becomes more effective, productive, and engaged.

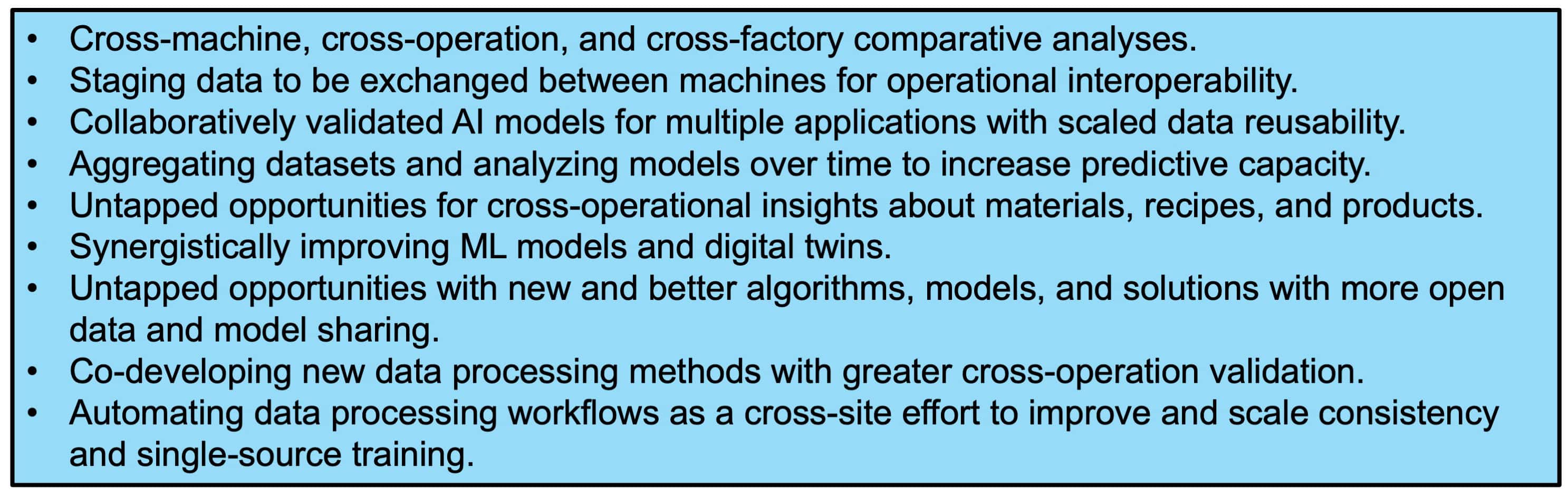

Economic opportunities and barriers with data sharing have been explained in studies conducted since 2020.³ The potential for substantial operational value is significant (Table 1), but data access remains a barrier. Processing of manufacturing data is often not prioritized, and when it is, it’s rarely done well or consistently across applications. It remains largely closed for application, tool, and training development. Like mined minerals, raw operational data hold little value in manufacturing until the data are qualified, refined, concentrated, and processed in sufficient quantities.

Smart manufacturing that orchestrates and scales AI/ML/DT applications leverages large volumes of factory data to create AI-ready data, which are consistently and persistently contextualized, qualified, prepared, and engineered for various applications at multiple operational scales. Consistency in data processing is a key objective. A Data-first strategy emphasizes the need to convert raw operational data into AI-ready data for any application to be effective. All manufacturers, whether small, medium, or large, have valuable data and contribute to a broader manufacturing ecosystem. We refer to collaborative factory/company sites that share data as a collaborative data ecosystem (CDE).

Table 1: Industry-Defined Points of Economic Value for Smart Manufacturing Collaborative Data Ecosystems (CDEs) that can Scale Data and AI

A Workshop to Benchmark the Value of Data Sharing

A workshop titled “Artificial Intelligence with Open and Scaled Data Sharing in the Semiconductor Industry,” sponsored by the National Science Foundation (NSF) and supported by the National Institute of Standards and Technology (NIST), aimed to benchmark the potential of scaled data sharing while addressing significant barriers. It brought together 32 factory engineers and data scientists from 12 semiconductor manufacturers. An additional 27 participants contributed by challenging, proposing, and reviewing paths forward; they included data scientists from academic institutions across the country, industry experts on information technology (IT) and operational technology (OT) infrastructure, specialists in price analysis and equipment building, and government leaders in advanced manufacturing.

The workshop focused on existing technologies (no R&D) and benchmarking near-term benefits. A Seagate/UCLA project team benchmarked the economic value points related to the data processing and engineering necessary to build a virtual metrology application for enhancing productivity. Wafer production datasets from five etch machines at different sites, used for similar operations across different products, were qualified, categorized, prepared, and engineered into AI-ready data. A common data information model was developed for all five machine tools using the CESMII SM Profile™⁴ to encode the data model in a digitally standard form. Data information modeling was also demonstrated on chemical mechanical planarization (CMP) machines at three company sites.

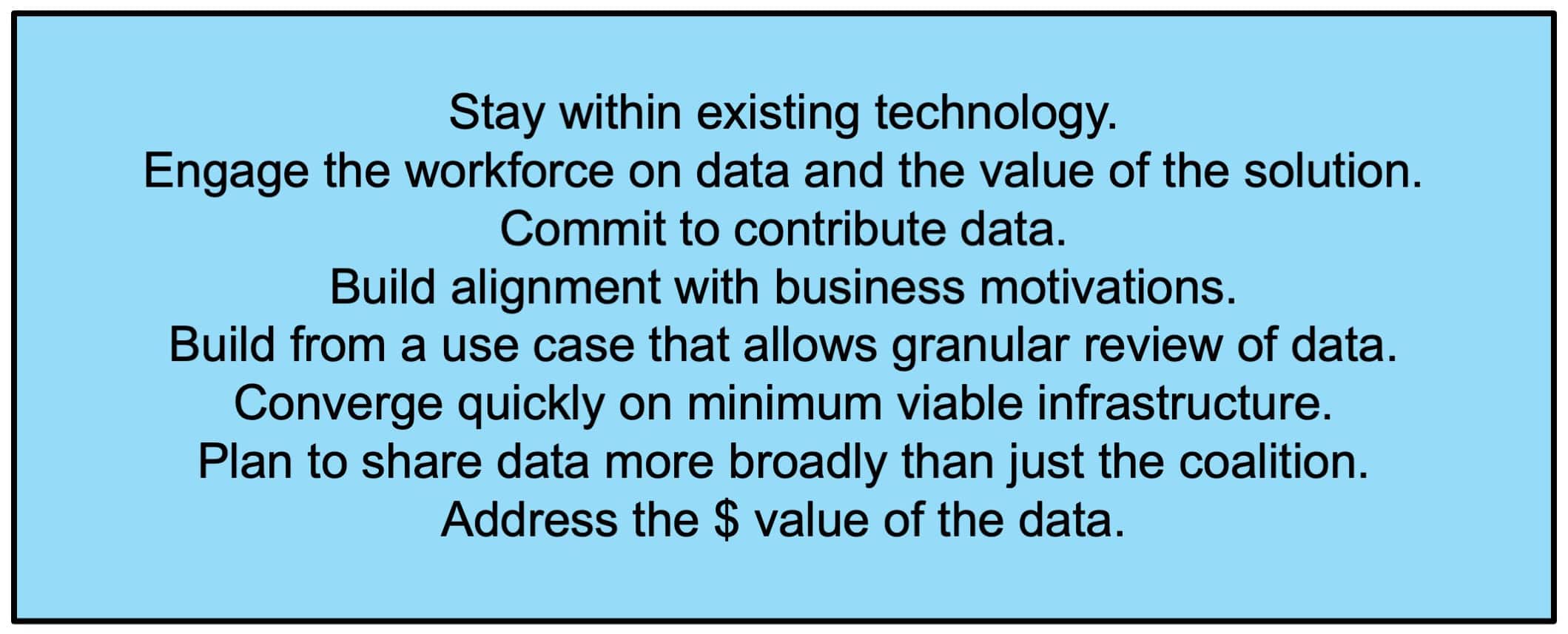

Executing on Consistent Data Processing as a CDE

Executing as a CDE required a commitment to a governance structure that ensured trust in site qualifications, consistent data processes, security, IP protections, and model validations. Factories and companies needed to collaborate on data preparation and build AI/ML models while maintaining autonomy over their products and applications. Factory site data engineers and scientists had to work together on solutions. Governance was supported by a “mindset” that challenged conventional thinking. Adhering to eight execution principles was critical for sustaining the ecosystem effort (Table 2).

Table 2: Eight Key Execution Principles for Industry Data Sharing

Business Value Basis for the Ecosystem to Form

This coalition established the CDE as a “market-driven, business entity.” This study demonstrates a CDE that is a bottom-up, business-focused entity for factories to increase the value of site data in collaboration with other factories and companies. It creates new business, revenue, and service opportunities based on data value and the benefits of jointly preparing data and building models. Interest in the CDE began with a viable business opportunity. Identifying specific operational benefits was the crucial next step. The execution principles propelled the CDE forward.

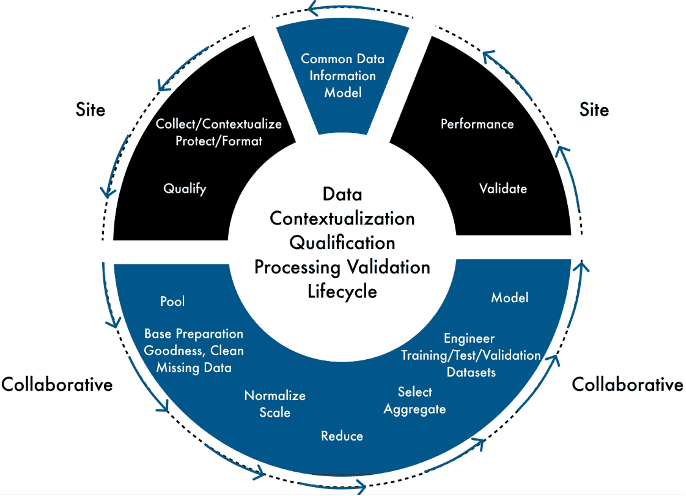

An Overall Finding about Data Processing Consistency

We highlight the key finding that data preparation and refinement consistency are best achieved through a workflow of repeatable data processing steps, which include (counterclockwise): (1) eliminating contextual and formatting inconsistencies with a common data information model as a collaborative step; (2) ensuring consistent qualification (operational quality) and formatting (including categorization of key operational features) as on-site steps; (3) maximizing pooled data processing through a workflow of collaborative steps; and (4) site validation and deployment with shared but individually applied solutions and methods (Figure 1).

Maximizing collaboration (shown in blue) while minimizing inconsistencies from site steps (in black) was essential for data processing consistency. The figure also emphasizes that consistency involves consistently selecting and applying methods for each step. Entry into the cycle is the common data information model.
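The four-step cycle can be sketched in a few lines of code. This is a toy illustration under stated assumptions, not the workshop's implementation: the field names, per-site mappings, unit conversions, and qualification thresholds are hypothetical, and the "shared model" is a placeholder rule standing in for a real virtual metrology model.

```python
# Hypothetical common data information model: shared field names and units.
COMMON_FIELDS = ("chamber_pressure_pa", "rf_power_w", "etch_time_s", "passed")

# Hypothetical per-site mappings: local column -> (common field, unit scale factor).
SITE_MAPPINGS = {
    "site_a": {"press_mtorr": ("chamber_pressure_pa", 0.133322),
               "rf_w": ("rf_power_w", 1.0),
               "t_sec": ("etch_time_s", 1.0),
               "ok": ("passed", 1)},
    "site_b": {"pressure_pa": ("chamber_pressure_pa", 1.0),
               "power_kw": ("rf_power_w", 1000.0),
               "time_ms": ("etch_time_s", 0.001),
               "pass_flag": ("passed", 1)},
}


def to_common(site, row):
    """Step 1 (collaborative): rename fields and convert units to the common model."""
    out = {"site": site}
    for local_name, (field, scale) in SITE_MAPPINGS[site].items():
        out[field] = row[local_name] * scale
    return out


def qualify(row):
    """Step 2 (on-site): keep only rows with plausible values (assumed thresholds)."""
    return (row["chamber_pressure_pa"] > 0 and row["rf_power_w"] > 0
            and row["etch_time_s"] > 0 and row["passed"] in (0, 1))


def pool(site_rows):
    """Step 3 (collaborative): combine qualified rows from every site."""
    pooled = []
    for site, rows in site_rows.items():
        pooled.extend(r for r in (to_common(site, x) for x in rows) if qualify(r))
    return pooled


def site_accuracy(model, pooled, site):
    """Step 4 (on-site): each site scores the shared model on its own rows only."""
    rows = [r for r in pooled if r["site"] == site]
    return sum(model(r) == r["passed"] for r in rows) / len(rows)


# Hypothetical raw batch-run rows as each site records them locally.
raw = {
    "site_a": [{"press_mtorr": 20.0, "rf_w": 500.0, "t_sec": 60.0, "ok": 1},
               {"press_mtorr": -5.0, "rf_w": 480.0, "t_sec": 55.0, "ok": 0}],
    "site_b": [{"pressure_pa": 2.5, "power_kw": 0.45, "time_ms": 90000, "pass_flag": 0},
               {"pressure_pa": 3.0, "power_kw": 0.52, "time_ms": 58000, "pass_flag": 1}],
}

pooled = pool(raw)


def toy_model(row):
    """Placeholder predictor, not a trained model: pass if etch time is under 75 s."""
    return int(row["etch_time_s"] < 75)


for site in raw:
    print(site, site_accuracy(toy_model, pooled, site))
```

The design point mirrors the finding above: the mapping and pooling logic is shared, while qualification and validation run against each site's own rows, so sites keep control of their data while applying the same methods.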

Figure 1. Consistent and Collaborative Data Refinement and Model Building

Key Benchmark Performance Findings

SM and AI/ML/DT systems can be implemented in a cost-effective manner. The CDE process was benchmarked against processing data and building the ML model independently for each machine:

- Batch run datasets from different sites were combined to create a qualified, consistently processed, and richer super dataset of 100,000 batch runs.

- ML model performance using aggregated data for predicting wafer flatness pass or fail was 30 percent to 50 percent better than performance with siloed training.

- Pooled processing could achieve 3x cost savings in staffing and avoid 4 full-time equivalents (FTEs) in increased headcount across all sites.

Factory-floor staff from various sites collaborated to create a common data information model for all machines, reflecting a shared expert understanding of machine operation. Building the common data information model facilitated co-developed methods to consistently qualify data, protect IP, categorize data, address security, and share training. Workforce training should ultimately be guided by the business value of data sharing. However, initial on-the-job training programs on data processing are needed to drive the value of data for AI/ML/DT applications.

Every success in this demonstration project was driven by the value and availability of consistently processed data. Focusing on shared data processing and engineering facilitated algorithm development and validation. Better data could be produced without increasing headcount or service requirements by pooling factory site data. If data are qualified, consistent, scalable, and trusted, cross-operational advantages follow. Cross-site, cross-factory, and cross-company data sharing is doable. A sufficient intersection of individual values and ways to address risks and barriers can be found. There is a line of sight to shared data inventories categorized with process conditions.

About the Authors

Sthitie Bom is Vice President of Factory Data, Analytics, and Applications at Seagate.

Jim Davis is UCLA Vice Provost IT (CIO/CTO) Emeritus.

Notes:

1. The content in this article is based upon work supported by the National Science Foundation (NSF) under Grant 2334590 and further supported by the National Institute of Standards and Technology (NIST). Any opinions, findings, and conclusions or recommendations expressed in this material are those of the author(s) and do not necessarily reflect the views of NSF or NIST.

2. The detailed NSF-sponsored, NIST-supported Workshop report is being prepared for publication. Workshop Organizing Committee: Sthitie Bom, Seagate Technology (co-chair); Jim Davis, UCLA (co-chair); Said Jahanmir, Office of Advanced Manufacturing, NIST; Bruce Kramer, Office of Advanced Manufacturing, NIST; Don Ufford, Office of Advanced Manufacturing (when work was done), NIST; Greg Vogl, Engineering Laboratory, NIST.

3. Towards Resilient Manufacturing Ecosystems through Artificial Intelligence – Symposium Report, NIST Advanced Manufacturing Series, NIST AMS 100-47, September 2022; Options for a National Plan for Smart Manufacturing, National Academies of Sciences, Engineering, and Medicine, Consensus Study Report, 2024.