Prioritizing digital projects to make early gains can create a path to improved digital maturity.

By Dennis McRae, Penny Wand, and David McGraw

Business leaders have made significant investments in numerous systems and applications over the last 20 years. You may know them best by their acronyms: enterprise resource planning (ERP); quality management system (QMS); manufacturing execution system (MES); supply chain management (SCM); customer relationship management (CRM). These systems are layered atop one another and span their organizations’ value chains – from customer experience, to design and engineering, to supply chain and procurement, to warehousing and distribution.

The issue is that data collected by these systems remains siloed. Data gathered by the back-office team isn’t integrated with data gathered on the shop floor, and neither is integrated with data on customer behavior. Sometimes individual departments don’t even know the other has systems in place to gather data.

We’ve seen the consequences. A company, for instance, is plagued with quality issues that are leaking into the field. But despite a wealth of available data, company leaders can’t figure out the root of the problem. The information they have is raw, chaotic, spread all over the place, and ungoverned; in effect, it can’t do its job. If the data were integrated across the organization – if it talked with other data – the company could do more than simply react to problems after the fact; it could predict those problems ahead of time, drive insights, and even create new products.
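To make the idea of data "talking with other data" concrete, here is a minimal Python sketch. It assumes two hypothetical extracts that share a lot number: production records from an MES and field complaints from a CRM. The field names and values are invented for illustration and do not come from any real system.

```python
from collections import defaultdict

# Hypothetical MES extract: one record per production lot (illustrative values).
production_lots = [
    {"lot": "A100", "line": "Line 1", "units": 500},
    {"lot": "A101", "line": "Line 2", "units": 500},
    {"lot": "A102", "line": "Line 2", "units": 400},
    {"lot": "A103", "line": "Line 1", "units": 600},
]

# Hypothetical CRM extract: one record per field complaint.
field_complaints = [
    {"ticket": 1, "lot": "A101"},
    {"ticket": 2, "lot": "A101"},
    {"ticket": 3, "lot": "A102"},
    {"ticket": 4, "lot": "A100"},
    {"ticket": 5, "lot": "A102"},
    {"ticket": 6, "lot": "A102"},
]


def complaint_rate_by_line(lots, complaints):
    """Join the two extracts on lot number; return complaints per 1,000 units by line."""
    line_of_lot = {rec["lot"]: rec["line"] for rec in lots}

    # Total units produced on each line.
    units = defaultdict(int)
    for rec in lots:
        units[rec["line"]] += rec["units"]

    # Count complaints per line via the lot each complaint refers to.
    counts = defaultdict(int)
    for complaint in complaints:
        line = line_of_lot.get(complaint["lot"])
        if line is not None:  # skip complaints that cannot be traced to a lot
            counts[line] += 1

    return {line: 1000 * counts[line] / units[line] for line in units}


rates = complaint_rate_by_line(production_lots, field_complaints)
for line, rate in sorted(rates.items()):
    print(f"{line}: {rate:.2f} complaints per 1,000 units")
```

Neither extract alone points to a production line; the pattern only appears once the two are joined on a shared key.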

Manufacturers have the data and the necessary technology. But the payoff is greater when manufacturers democratize data by deploying self-service capabilities that make the right data available to the right person at the right time.

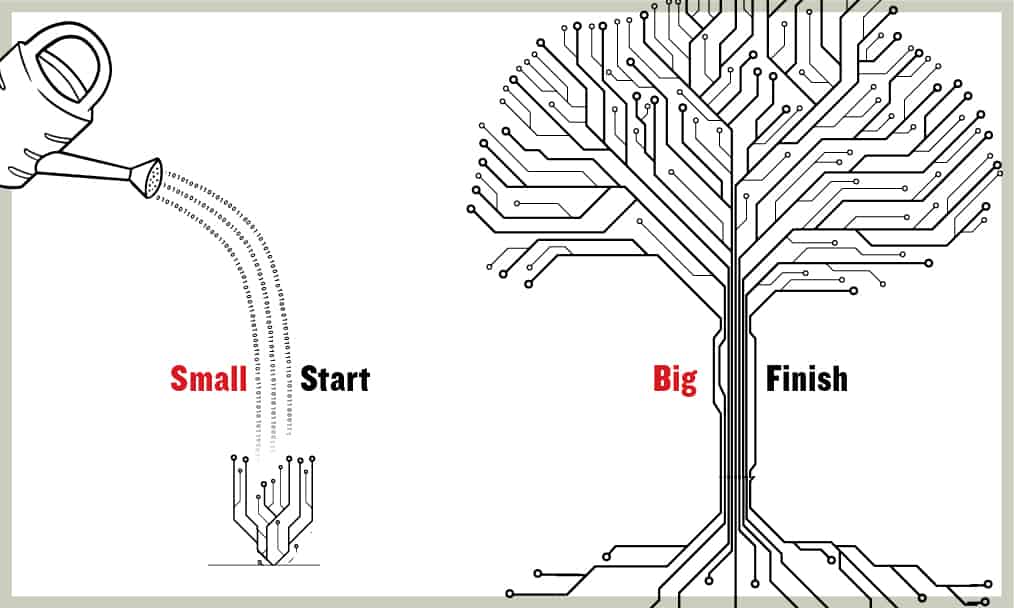

The Approach: To Do Big Things, You’ve Got to Start Small

Given the sheer number of data sources, companies tend to struggle under the weight of endless options. Should they start on the supply chain side? With product performance? Customer experience?

In the end, they either take on too many digital projects (and make minimal progress) or go with what’s easiest to implement (instead of what will drive the most value). This is true of large companies, but it’s also true for middle-market companies that may be less digitally mature despite growth by acquisition, having added new and disparate data sources along the way.


That’s why the suggested approach starts small and delivers value fast. Rather than thinking in terms of a vast roadmap or engineering insights, we advise executives to begin with the data required to drive value-based use cases, working side by side with experts on their own teams to understand where problem areas may reside, who has access, and what it all means. This process, which we refer to as rapid insights [1], leads to clarity about where value can be created in specific areas of the organization.

Key stakeholders can then decide which projects to prioritize, what data is required to drive use cases for those projects, and what governance and platforms might need to be put into place. Within weeks, the initiative can be tested and iterated on its path toward democratization.

We watched this play out recently with a large chemical manufacturer. The company had implemented an ERP solution in conjunction with its parent company, but given the system’s management structure and operations, its end users had to open a support ticket each time they sought access to new data or wanted to create reports. As a result, reports took days to produce, and the company’s business teams were at risk of using outdated insights in developing them. The process became even more costly in time and efficiency because once users acquired a data extract from the ERP system, significant manual manipulation of that data was needed to prepare reports.

Like many manufacturers, the organization needed to remain attuned to real-time changes in customer needs, competitor activities, and supply chain capabilities. The stifling nature of producing reports hindered them in these respects: They could not make proactive and timely sales, marketing, and production decisions.

Analytics and reporting initiatives historically require multiyear development cycles. But by focusing attention on this area, gathering insights in quick sprints, and deploying a rapid analytics platform (RAP) [2] to automate data pipeline management, the chemical manufacturer was able to launch the solution in just six months. Combining the RAP with cloud data from the ERP and applying data visualization tools gives business users a self-service reporting solution that draws on real-time data from the company’s ERP.

Understanding the Data Maturity Cycle

That example not only demonstrates the efficacy of a targeted approach but also highlights some of the key stages along the path to data maturity. As more functionality – e.g., self-service reporting tools – is implemented on a given data platform, the organization gains greater competitive advantages. Knowing what these stages are and what they entail can be critical for manufacturing executives who want to better understand where they stand and where to go next.

- Report: What happened? Traditional tabular or Excel operational reports typically answer the question “what happened?” The practical challenges with data quality and governance are well documented, but a larger underlying issue that often slows progress is low data literacy: the organization lacks the skills to trace a problem to its data-driven root cause. The organization might feel intimidated, might not know where to begin, or might find that the IT department and the factory floor speak different languages and use different systems. Starting with a cross-silo visual investigation of “what is” and “what happened” increases individual and organizational curiosity and, ultimately, literacy. The old adage that a picture is worth a thousand words applies: a visual approach accelerates understanding through a three-dimensional view of data using drill-through capabilities. Once they reach this stage, organizations want to know why something happened and want to identify and address the data-related issues.

- Analyze: Why did it happen? Being able to find a root cause means having access to data in real time. End users working at the aforementioned chemical company needed to access their ERP data and quickly pull and use information of interest. A self-service BI platform, drawing on clean, standardized data, can enable this capability. It’s also the first step toward data democratization and more advanced analytics, again using visual techniques.

- Predict: What will happen next? It’s one thing to know what happened; it’s another to predict what will happen. That’s where predictive analytics come in. Given the statistical nature of these analytics, some end users might be able to build their own models. Others in the organization might draw on tools that simplify such modeling.

- Optimize: What should happen? Managed machine learning and prescriptive analytics can help business leaders actually make decisions instead of merely acknowledging that a trend line is directionally correct. For instance, accurate customer demand forecasts might spur leaders to reassess their procurement purchases of raw materials.

- Cognitive: Full AI. In other words, new insights and recommended actions are generated autonomously by computers.
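The first four stages can be sketched on a single toy dataset. The following Python example is only an illustration under stated assumptions: the regional demand figures, the raw-material inventory, and the conversion factor are all hypothetical, and a straight-line fit stands in for whatever predictive model an organization would actually use. The Cognitive stage is not covered.

```python
# Hypothetical monthly unit demand for two sales regions (illustrative values).
demand_by_region = {
    "North": [60, 62, 61, 63, 62, 64],
    "South": [40, 48, 59, 67, 78, 86],
}
on_hand_raw_material_kg = 200  # assumed current inventory
kg_per_unit = 2                # assumed raw material needed per unit


def fit_line(ys):
    """Ordinary least-squares line through the points (0, ys[0]), (1, ys[1]), ..."""
    n = len(ys)
    xs = range(n)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    slope = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys)) / sum(
        (x - mean_x) ** 2 for x in xs
    )
    return slope, mean_y - slope * mean_x


# Report: what happened? Total demand per month across regions.
monthly_total = [sum(month) for month in zip(*demand_by_region.values())]

# Analyze: why did it happen? Find the region with the largest change over the period.
growth = {region: series[-1] - series[0] for region, series in demand_by_region.items()}
main_driver = max(growth, key=growth.get)

# Predict: what will happen next? Extrapolate the trend one month ahead.
slope, intercept = fit_line(monthly_total)
forecast = slope * len(monthly_total) + intercept

# Optimize: what should happen? Buy only the raw material the forecast requires.
order_kg = max(0.0, forecast * kg_per_unit - on_hand_raw_material_kg)

print("Report  :", monthly_total)
print("Analyze :", main_driver, "drove the change", growth)
print("Predict :", round(forecast, 1), "units next month")
print("Optimize:", round(order_kg, 1), "kg of raw material to order")
```

Each stage reuses the output of the one before it, which is one reason clean, shared data matters more as an organization moves along the cycle.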


Technology exists for all forms of data analytics. What’s typically missing, especially with AI/ML capabilities, are the processes, governance, production models, and overall data literacy necessary to achieve the intended value. This means prioritizing use cases and tackling governance one area at a time, starting with the areas of highest importance and the greatest chance of success given the currently available data. It requires tackling data quality, competency, and overall literacy. It means making data everyone’s domain.
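One simple way to put that prioritization into practice is to score each candidate use case on importance and on how ready its data is, then rank by the product. The Python sketch below is a hypothetical illustration, not a prescribed method: the 1-to-5 scales, the multiplication rule, and the example scores are all assumptions that stakeholders would need to agree on for themselves.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    name: str
    business_value: int  # assumed scale: 1 (low) to 5 (high)
    data_readiness: int  # assumed scale: 1 (missing or ungoverned) to 5 (clean, accessible)

    @property
    def score(self) -> int:
        # Multiplying penalizes use cases that are weak on either dimension.
        return self.business_value * self.data_readiness


def prioritize(use_cases):
    """Return use cases ordered from highest to lowest score."""
    return sorted(use_cases, key=lambda uc: uc.score, reverse=True)


# Hypothetical candidates with illustrative scores.
candidates = [
    UseCase("Field quality root-cause analysis", business_value=5, data_readiness=2),
    UseCase("Self-service ERP reporting", business_value=4, data_readiness=4),
    UseCase("Customer demand forecasting", business_value=5, data_readiness=3),
]

ranked = prioritize(candidates)
for uc in ranked:
    print(f"{uc.score:>2}  {uc.name}")
```

In this toy ranking, the highest-value use case lands last because its data is not yet ready, which mirrors the point about starting where value and available data overlap.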

Starting with bite-sized chunks reduces complexity and stress inherent in progressing through this cycle; each time business leaders move through it, they will adopt new skills, processes, and technical know-how that will drive future success.

Leveraging Data to Create New Revenue Models

We often begin our conversations with business leaders about a problem area they hope data can help solve. But part of what makes these rapid insights so successful is that leaders also emerge with insights about their data they didn’t expect.

While these leaders may start with small projects, it doesn’t mean they shouldn’t seize new opportunities presented by integrated data analytics. In the manufacturing industry, these opportunities are beginning to take the form of product-as-a-service (PaaS) offerings.

Democratized data can empower your people to drive new business insights, value creation, and even new revenue models.

Michelin, for example, provides a perfect case study in how to build a PaaS – and how not to [3].

That company’s first attempt at becoming a service provider began in 2000. Instead of simply producing and selling tires, Michelin sought to create a PaaS model in which large vehicle fleet operators, by paying a monthly fee, could share the risk in tire replacement with the company. The initiative was a resounding failure, in large part because Michelin failed to communicate the value of its added service (i.e., better-maintained tires last longer and provide better fuel efficiency).

Cut to 2013, when senior managers at Michelin created a separate division, Michelin Solutions, to leverage IoT and other information systems to develop services for commercial vehicles. That solution, EFFIFUEL, used data from vehicle sensors to track fuel consumption, tire pressure, temperature, speed, and location. After processing the resulting data, experts provided recommendations on economical driving techniques.

This time, with integrated data analytics that demonstrated the value of its PaaS, Michelin’s endeavor was a success both for the company and its customers.

Manufacturers that begin with a clear understanding of where their data comes from and where it can create value will be able to monetize this data in other ways. That might result in a PaaS similar to Michelin, a better-tailored customer experience, selling data itself, or engineering entirely new products.

Half the Battle Has Been Won – Now It’s Time to Connect the Dots

As new systems (and acronyms) continued to emerge over the past two decades, the technology surpassed the governance, while the sheer amount of data those systems produced surpassed business leaders’ ability to actually use it.

But getting data out of silos – so that it can be democratized across an organization, standardized, and accessible in real time by the end users who need it – is not a matter of the technologies themselves. It’s about the people who operate those technologies and the processes they follow. Getting data to talk to other data starts by people talking to other people, working side by side to prioritize projects that generate value, and doing so in weeks and months – not years.

In other words, manufacturers don’t need more acronyms and more systems. They need to empower their people to leverage data for value creation. This means continuously improving on how the data is used from basic BI to predictive analytics and then rewarding the organization for driving continuous improvement around how data is used to create value for the business and its customers.

The data imperative is now. Democratize your organization’s data in a self-service environment by making data accessible, and empower your people to house and leverage governed data to drive new business insights, value creation, and even new revenue models.

Footnotes

1. https://www.westmonroepartners.com/Insights/Newsletters/Tech-Data-Driven-Rapid-Insights

2. https://www.westmonroepartners.com/en/Insights/Solution-Briefs/Rapid-Analytics-Platform

3. https://digital.hbs.edu/platform-rctom/submission/michelin-tires-as-a-service/